Strategic intelligence

OBJX Intelligence

2024–2025

Platform health score

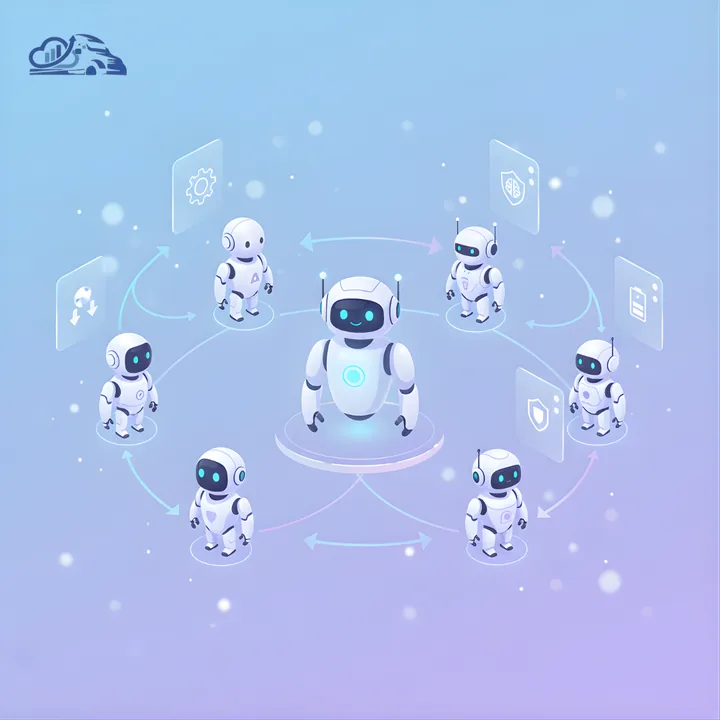

Named agents x tiers

Enterprise agent-orchestration platform built around a proprietary Trinity Architecture and an X + Y = Z methodology. Van Data Team shipped named agents, a five-tier permission model, billing controls, and a production deployment for a multi-tenant intelligence workflow.