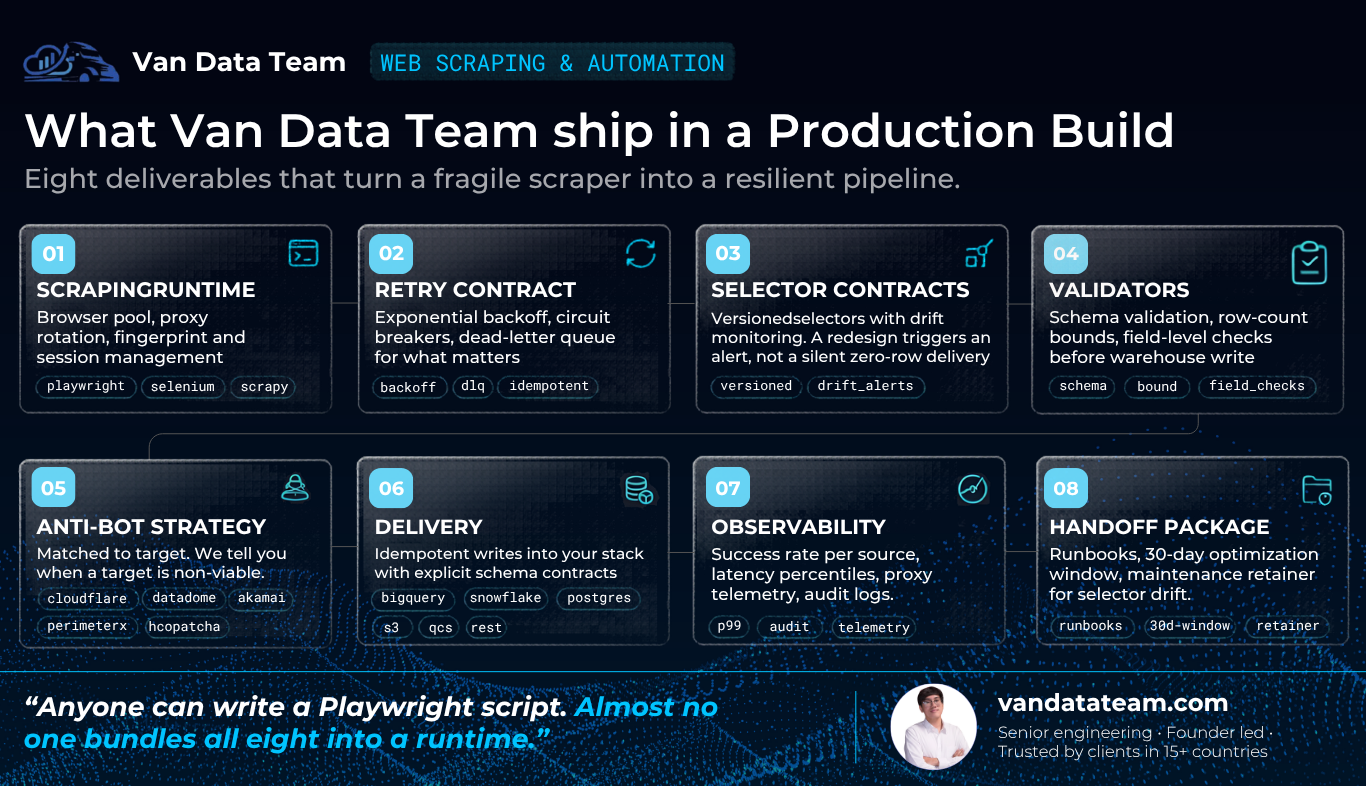

The reason scrapers fail in production isn't the code. It's the absence of those layers. A senior engineer can write a working Playwright script in an afternoon. Almost no one bundles all seven into a runtime that survives a site redesign, an anti-bot upgrade, and a proxy IP burn in the same quarter.

Web Scraping & Automation services:

cost, scope, and how to hire

The honest cost to hire production-grade web scraping services in 2026 ranges from $500–$2,000 one-time at a founder-led boutique like Van Data Team — scoped per project, with proxies, compute, and selector maintenance billed only when used. The same scope at a US agency runs $25K–$120K plus $5K–$25K/month. Managed APIs (Bright Data, Apify, ScrapingBee) win for public, moderate-volume targets and lose on cost the moment you cross 200K pages/month or need custom delivery into a governed warehouse.

Van Data Team is a Vietnam-based, founder-led engineering practice that ships Playwright-first scraping runtimes, browser pool, proxy rotation, selector contracts, validators, and structured delivery into BigQuery, Snowflake, Postgres, or S3. Not a freelancer who disappears after the first selector breaks. Not a per-page meter that turns into a $15K/month line item at scale.

Key takeaways for buyers

Engagement model

Free Strategy Sprint Consultancy to define the scope, Production Build with custom fixed-bid pricing per project, and an Embedded Partner retainer for ongoing maintenance.

Stack

Playwright-first runtime with selector contracts, dead-letter queues, validators, and audit logs. Delivery into BigQuery, Snowflake, Postgres, S3, or REST.

Timeline

2–6 weeks kickoff to production for one to five sources. Faster on plain HTML, longer for Cloudflare, DataDome, or login-gated targets.

When not to hire us

If targets are public, volume is moderate, and an API-shaped delivery is fine, a managed API like Apify or Bright Data is the right call. We say so on the first call.

What is Web Scraping & Automation, in production terms?

Web Scraping & Automation is the production runtime that extracts public web data at scale, validates it against a schema, and lands it in your warehouse on a recurring SLA. It is not a one-off Python script. It is browser pool, proxy rotation, selector contracts, retry plus dead-letter queues, validators, and observability, stitched together so the data keeps flowing past month three.

Our 1M+ pages/month enterprise scraping case study runs at 99.9% extraction success with structured delivery into BigQuery. The patterns below are the ones that survived production.

What we ship in a Production Build

Each of these eight layers maps to a failure mode we've seen recur across 150+ engagements. The result: 99.9% extraction success at 1M+ pages/month, with resilient web scraping that survives site redesigns, anti-bot updates, and proxy IP burns past month three.

Managed API vs freelancer vs US agency vs Van Data Team

A serious vendor names where they lose. Managed APIs win for hands-off public targets. Van Data Team wins in the messy middle: anti-bot pressure, custom logic, higher volume, and warehouse-grade delivery.

| Decision factor | Managed API | Typical freelancer | US agency | Van Data Team |

|---|---|---|---|---|

| Public targets, simple, moderate volume | Strong fit | Acceptable | Overkill | Overkill |

| Anti-bot sophisticated, recurring SLA | Hits cost ceiling | Breaks in week 4 | Strong fit | Strong fit |

| 1M+ pages/month with custom logic | Cost-prohibitive | Not realistic | Strong fit | Strong fit |

| Custom delivery into governed warehouse | Limited | DIY scripts | Native | Native |

| Pricing model | Per-page meter | Hourly, often unscoped | Six-figure retainers | Fixed-bid by scope |

| Time-to-PoC | Hours | Days | 4-8 weeks | 2-4 weeks |

| Accountability when scraper breaks | Vendor SLA | Often none | Account manager | Founder named in contract |

Managed APIs win for hands-off, public, moderate-volume targets. Freelancers win for short experiments. US agencies win when procurement needs a name brand and a six-figure budget. Van Data Team wins in the messy middle: mid-market teams with anti-bot pressure, custom logic, or volumes that exceed managed API limits and need warehouse-grade delivery.

Why scripts fail in production and what a runtime closes

Most production failures aren't coding bugs. They're architectural gaps. Four predictable failure modes show up across every post-mortem and rescue engagement we've taken.

Silent data loss

Symptom: a scraper runs successfully but returns zero rows after a site redesign. The warehouse table goes stale and a downstream dashboard quietly lies. Root cause: hard-coded selectors with no versioning and no drift monitoring. Fix: versioned selector contracts with per-source success-rate baselines. A drop below 95% triggers a structured alert before the table goes stale. Selectors are regression-tested on every deploy.

Exploding proxy costs

Symptom: proxy bills triple unexpectedly, or success rates collapse as datacenter IPs get flagged on fingerprint-heavy sites. Root cause: wrong proxy class for the target, plus uncontrolled retry loops burning residential bandwidth. Fix: proxy class matched on Day 1, residential for fingerprint-heavy sites, datacenter for permissive high-volume scrapes. Per-source retry quotas. Proxy-bill telemetry on the same dashboard as success rate, so a cost spike is visible the same hour it starts.

Confidently wrong data

Symptom: the scraper returns rows and writes garbage. A selector quietly matches the wrong element, a pagination loop stops one page early, and the dashboard shows confident wrong numbers. Root cause: no validation before warehouse delivery. Fix: four pre-write validators (schema, row-count sanity bounds, field-level type and format, per-source freshness). Failures route to a dead-letter queue with structured feedback, never a silent write.

Wrong action executed (automation)

Symptom: a “working” automation submits a form twice, pays the wrong invoice, or marks the wrong record as processed. Root cause: no idempotency, no audit trail, no human checkpoint. Fix:every action guarded by a uniqueness key so a re-run can't double-submit. Timestamped audit log of every click, input, and response. Mandatory human-in-the-loop checkpoints on financial, communicative, and irreversible steps. Dry-run mode on every deploy.

Native scripts optimize for the first run. Custom web scraping services optimize for month four. That's the gap a Production Build closes.

How an engagement runs

Pricing is custom per project, anti-bot pressure, volume, targets, and delivery shift with every build. A flat menu would over-charge the simple ones or under-scope the complex ones, so we publish the path instead:

Strategy Sprint — Free

30-minute discovery call plus async follow-up. You walk away with a target source map, anti-bot assessment, runtime architecture, and a fixed-bid quote for the Production Build, whether or not we end up shipping the build.

Production Build, custom fixed-bid, scoped on the Sprint

End-to-end delivery: scraper, proxy strategy, validators, warehouse delivery, monitoring, runbook. 2–6 weeks for one to five sources. 30-day post-launch optimization window. Most builds are scoped per project, fixed-bid after the Sprint, and dramatically below comparable US agency scope.

Embedded Partner, retainer, custom monthly scope

Selector maintenance, anti-bot upgrades, source additions, dashboard upkeep, incident response. This is what keeps scrapers above 99% success past month three.

The same senior engineer runs all three phases. No handoff drift, no junior bench. Strongest fit: market intelligence, e-commerce, B2B research, real estate, travel, and AI / RAG retrieval pipelines that need clean structured JSON or Markdown with provenance.

Ongoing line items to budget alongside the build:

Residential proxies (charged by your proxy provider, not us), browser compute on Cloud Run or GKE, and a maintenance retainer to absorb selector drift and anti-bot updates. We size all three on the Sprint so you can plan the calendar and budget end-to-end.

150+ Companies Have Chosen VANDATATEAM

VanDataTeam is proud to partner with 150+ leading companies across 15+ countries, building lasting success through production-grade AI Agent systems and data engineering expertise.

Ahrvo

Client

Athenahealth

Clarify IQ

Cleargen

Conversion Finder

Debit My Data

Ellipsis Earth

Client

Finance Scaler

Forskningslogen Friederich Munter

HBC

Hello Alma

Hudson's Bay Company

Kejora

Kiki AI

Lunada

OBJX

Praxis AI

Client

Rarity Capital

Re Talk Py

Setmore

Stock Exploit

Supply Bridge

Thrive 5 IR

Voodoo

Client

Wajooba

White Ribbon Alliance

With Words

You Heal

Ahrvo

Client

Clarify IQ

Conversion Finder

Ellipsis Earth

Client

Forskningslogen Friederich Munter

Hello Alma

Kejora

Lunada

Praxis AI

Client

Re Talk Py

Stock Exploit

Thrive 5 IR

Wajooba

With Words

Athenahealth

Cleargen

Debit My Data

Finance Scaler

HBC

Hudson's Bay Company

Kiki AI

OBJX

Rarity Capital

Setmore

Supply Bridge

Voodoo

Client

White Ribbon Alliance

You Heal

Ahrvo

Client

Cleargen

Ellipsis Earth

Client

HBC

Kejora

OBJX

Re Talk Py

Supply Bridge

Wajooba

You Heal

Athenahealth

Conversion Finder

Finance Scaler

Hello Alma

Kiki AI

Praxis AI

Client

Setmore

Thrive 5 IR

White Ribbon Alliance

Clarify IQ

Debit My Data

Forskningslogen Friederich Munter

Hudson's Bay Company

Lunada

Rarity Capital

Stock Exploit

Voodoo

Client

With Words

Companies That Achieved Breakthrough Growth With Web Scraping & Automation Services of Van Data Team

Production-grade scraping at 1M+ pages/month, 99.9% extraction success, anti-bot bypass on Cloudflare and DataDome. 15 case studies across e-commerce, real estate, B2B research, and AI training pipelines.

Challenge

A B2B platform needed structured company profiles at scale from Crunchbase and LinkedIn with no manual operator intervention.

What We Did

Built three Docker microservices on CapRover, with a Crunchbase worker, LinkedIn worker, remote Selenium Grid, account rotation, and DB-driven parameters for cookies, proxies, and payloads.

Key Results

- 3 isolated microservices deployable independently via CapRover

- Selenium Grid plus parallel Chrome and Edge containers

- Zero-code reconfiguration through a database parameter table

- Authwall auto-recovery for long-running scraping sessions

What clients say about our Web Scraping & Automation Services

Van Data Team builds production-grade Web Scraping runtimes that feed your warehouse: BigQuery, Snowflake, Postgres, or S3. Playwright-first browser pool, selector contracts with drift monitoring, pre-write validators, anti-bot strategy matched to the target, and observability with proxy-bill telemetry. The same senior engineer who scopes your build also hardens it in production.

Verified review signal

5.0

71 reviews on Upwork

Chod S.

5.00February 25, 2026

Data Extraction & Automation Engineer for Large Document Repository

Verified rating captured from the shared Upwork review screenshots.

Chris K.

5.00December 30, 2025

FT Platform Phase #2

"Great backend developer, highly recommend!"

Gilad B.

5.00December 18, 2025

Phase 0: Design a granular data schema and structure, and full tool flow

"Very knowledgeable and professional. Good communication"

Chris K.

5.00December 5, 2025

BQ Pipeline Automation + Lightweight API

Verified rating captured from the shared Upwork review screenshots.

Ari B.

5.00November 25, 2025

30 minute consultation

Verified rating captured from the shared Upwork review screenshots.

Tomer S.

5.00September 9, 2025

Data handling

Verified rating captured from the shared Upwork review screenshots.

Julio D.

5.00July 16, 2025

Web scraper in R

"Tran was great, very knowledgeable and quick responses"

Jason Y.

5.00June 13, 2025

Flowise N8N AI Agent Builder

Verified rating captured from the shared Upwork review screenshots.

Adam P.

5.00May 26, 2025

scrape data for research project

Verified rating captured from the shared Upwork review screenshots.

Dillon M.

5.00May 16, 2025

30 minute consultation

Verified rating captured from the shared Upwork review screenshots.

Bernard V.

5.00May 12, 2025

30 minute consultation

Verified rating captured from the shared Upwork review screenshots.

Preska T.

5.00April 10, 2025

LLM

Verified rating captured from the shared Upwork review screenshots.

Madison M.

5.00March 31, 2025

Review Git Pull Requests

"Very responsive and quick to get started! Produced excellent results. I will definitely reach out again in the future."

David C.

5.00March 29, 2025

You will get AWS, GCP and Azure Data pipeline

"Great platform to interface with developer."

Alex J.

5.00February 18, 2025

You will get Data Scraping | Data Extraction | Web Scraper | Automation Tools

"Fast, responsive, professional. Really appreciated the thorough documentation too."

Tomer S.

5.00December 12, 2024

Create Web scraper for Facebook

"Van exceeded all expectations with exceptional professionalism and expertise. They delivered high-quality work ahead of schedule, communicated effectively throughout the project, and made the collaboration seamless and enjoyable. I highly recommend Van to anyone looking for a skilled and reliable freelancer."

Preska T.

5.00October 21, 2024

You will get Data Scraping | Data Extraction | Web Scraper | Automation Tools

"I recently had the pleasure of working with Tran, and I can't express enough how impressed I am with his work. From the very beginning, he demonstrated a deep understanding of our project requirements and brought a level of expertise that made a significant difference in the outcome. What truly sets Tran apart is his commitment to excellence."

Alice W.

5.00June 28, 2024

Data Pipeline

Verified rating captured from the shared Upwork review screenshots.

Alice W.

5.00May 26, 2024

Admin Panel for Data Management

"AMAZING. We are lucky that we found Van. He helped us with our database structure. He is very knowledgeable and very cooperative. We are still continuing to work with him further. I can only highly recommend."

Nic S.

5.00May 15, 2024

Web scraping - Project review and proposal

"Excellent work. I'd be very happy to work with Tran in the future."

Paris K.

5.00March 22, 2024

Seeking developers experienced with LAMP (Python) and REST APIs for UX Research Study / gstd-2024-1

"Tran did a great job on a LAMP REST API deployment to Microsoft Azure. We'd be happy to work with this freelancer again."

Yalcin K.

5.00March 18, 2024

Python Developer to Build a Shopify Integration

"Van is a great data engineer, and I highly recommend it. He joined our project and helped build a custom data pipeline within weeks."

Omer D.

4.80March 5, 2024

Attach Stripe webhook to Flask server

Verified rating captured from the shared Upwork review screenshots.

Chod S.

5.00February 25, 2026

Data Extraction & Automation Engineer for Large Document Repository

Verified rating captured from the shared Upwork review screenshots.

Gilad B.

5.00December 18, 2025

Phase 0: Design a granular data schema and structure, and full tool flow

"Very knowledgeable and professional. Good communication"

Ari B.

5.00November 25, 2025

30 minute consultation

Verified rating captured from the shared Upwork review screenshots.

Julio D.

5.00July 16, 2025

Web scraper in R

"Tran was great, very knowledgeable and quick responses"

Adam P.

5.00May 26, 2025

scrape data for research project

Verified rating captured from the shared Upwork review screenshots.

Bernard V.

5.00May 12, 2025

30 minute consultation

Verified rating captured from the shared Upwork review screenshots.

Madison M.

5.00March 31, 2025

Review Git Pull Requests

"Very responsive and quick to get started! Produced excellent results. I will definitely reach out again in the future."

Alex J.

5.00February 18, 2025

You will get Data Scraping | Data Extraction | Web Scraper | Automation Tools

"Fast, responsive, professional. Really appreciated the thorough documentation too."

Preska T.

5.00October 21, 2024

You will get Data Scraping | Data Extraction | Web Scraper | Automation Tools

"I recently had the pleasure of working with Tran, and I can't express enough how impressed I am with his work. From the very beginning, he demonstrated a deep understanding of our project requirements and brought a level of expertise that made a significant difference in the outcome. What truly sets Tran apart is his commitment to excellence."

Alice W.

5.00May 26, 2024

Admin Panel for Data Management

"AMAZING. We are lucky that we found Van. He helped us with our database structure. He is very knowledgeable and very cooperative. We are still continuing to work with him further. I can only highly recommend."

Paris K.

5.00March 22, 2024

Seeking developers experienced with LAMP (Python) and REST APIs for UX Research Study / gstd-2024-1

"Tran did a great job on a LAMP REST API deployment to Microsoft Azure. We'd be happy to work with this freelancer again."

Omer D.

4.80March 5, 2024

Attach Stripe webhook to Flask server

Verified rating captured from the shared Upwork review screenshots.

Chris K.

5.00December 30, 2025

FT Platform Phase #2

"Great backend developer, highly recommend!"

Chris K.

5.00December 5, 2025

BQ Pipeline Automation + Lightweight API

Verified rating captured from the shared Upwork review screenshots.

Tomer S.

5.00September 9, 2025

Data handling

Verified rating captured from the shared Upwork review screenshots.

Jason Y.

5.00June 13, 2025

Flowise N8N AI Agent Builder

Verified rating captured from the shared Upwork review screenshots.

Dillon M.

5.00May 16, 2025

30 minute consultation

Verified rating captured from the shared Upwork review screenshots.

Preska T.

5.00April 10, 2025

LLM

Verified rating captured from the shared Upwork review screenshots.

David C.

5.00March 29, 2025

You will get AWS, GCP and Azure Data pipeline

"Great platform to interface with developer."

Tomer S.

5.00December 12, 2024

Create Web scraper for Facebook

"Van exceeded all expectations with exceptional professionalism and expertise. They delivered high-quality work ahead of schedule, communicated effectively throughout the project, and made the collaboration seamless and enjoyable. I highly recommend Van to anyone looking for a skilled and reliable freelancer."

Alice W.

5.00June 28, 2024

Data Pipeline

Verified rating captured from the shared Upwork review screenshots.

Nic S.

5.00May 15, 2024

Web scraping - Project review and proposal

"Excellent work. I'd be very happy to work with Tran in the future."

Yalcin K.

5.00March 18, 2024

Python Developer to Build a Shopify Integration

"Van is a great data engineer, and I highly recommend it. He joined our project and helped build a custom data pipeline within weeks."

Chod S.

5.00February 25, 2026

Data Extraction & Automation Engineer for Large Document Repository

Verified rating captured from the shared Upwork review screenshots.

Chris K.

5.00December 5, 2025

BQ Pipeline Automation + Lightweight API

Verified rating captured from the shared Upwork review screenshots.

Julio D.

5.00July 16, 2025

Web scraper in R

"Tran was great, very knowledgeable and quick responses"

Dillon M.

5.00May 16, 2025

30 minute consultation

Verified rating captured from the shared Upwork review screenshots.

Madison M.

5.00March 31, 2025

Review Git Pull Requests

"Very responsive and quick to get started! Produced excellent results. I will definitely reach out again in the future."

Tomer S.

5.00December 12, 2024

Create Web scraper for Facebook

"Van exceeded all expectations with exceptional professionalism and expertise. They delivered high-quality work ahead of schedule, communicated effectively throughout the project, and made the collaboration seamless and enjoyable. I highly recommend Van to anyone looking for a skilled and reliable freelancer."

Alice W.

5.00May 26, 2024

Admin Panel for Data Management

"AMAZING. We are lucky that we found Van. He helped us with our database structure. He is very knowledgeable and very cooperative. We are still continuing to work with him further. I can only highly recommend."

Yalcin K.

5.00March 18, 2024

Python Developer to Build a Shopify Integration

"Van is a great data engineer, and I highly recommend it. He joined our project and helped build a custom data pipeline within weeks."

Chris K.

5.00December 30, 2025

FT Platform Phase #2

"Great backend developer, highly recommend!"

Ari B.

5.00November 25, 2025

30 minute consultation

Verified rating captured from the shared Upwork review screenshots.

Jason Y.

5.00June 13, 2025

Flowise N8N AI Agent Builder

Verified rating captured from the shared Upwork review screenshots.

Bernard V.

5.00May 12, 2025

30 minute consultation

Verified rating captured from the shared Upwork review screenshots.

David C.

5.00March 29, 2025

You will get AWS, GCP and Azure Data pipeline

"Great platform to interface with developer."

Preska T.

5.00October 21, 2024

You will get Data Scraping | Data Extraction | Web Scraper | Automation Tools

"I recently had the pleasure of working with Tran, and I can't express enough how impressed I am with his work. From the very beginning, he demonstrated a deep understanding of our project requirements and brought a level of expertise that made a significant difference in the outcome. What truly sets Tran apart is his commitment to excellence."

Nic S.

5.00May 15, 2024

Web scraping - Project review and proposal

"Excellent work. I'd be very happy to work with Tran in the future."

Omer D.

4.80March 5, 2024

Attach Stripe webhook to Flask server

Verified rating captured from the shared Upwork review screenshots.

Gilad B.

5.00December 18, 2025

Phase 0: Design a granular data schema and structure, and full tool flow

"Very knowledgeable and professional. Good communication"

Tomer S.

5.00September 9, 2025

Data handling

Verified rating captured from the shared Upwork review screenshots.

Adam P.

5.00May 26, 2025

scrape data for research project

Verified rating captured from the shared Upwork review screenshots.

Preska T.

5.00April 10, 2025

LLM

Verified rating captured from the shared Upwork review screenshots.

Alex J.

5.00February 18, 2025

You will get Data Scraping | Data Extraction | Web Scraper | Automation Tools

"Fast, responsive, professional. Really appreciated the thorough documentation too."

Alice W.

5.00June 28, 2024

Data Pipeline

Verified rating captured from the shared Upwork review screenshots.

Paris K.

5.00March 22, 2024

Seeking developers experienced with LAMP (Python) and REST APIs for UX Research Study / gstd-2024-1

"Tran did a great job on a LAMP REST API deployment to Microsoft Azure. We'd be happy to work with this freelancer again."

Frequently asked questions

A custom Playwright build with proxy strategy, validators, and warehouse delivery is scoped per project at Van Data Team. Most one-to-five-source builds land between $500 and $2,000, fixed-bid after the free Strategy Sprint. Proxies and compute pass through to your providers; maintenance is scoped separately when needed.

Playwright is the default for modern JavaScript-heavy sites and anti-bot pressure. Scrapy is better for high-volume plain HTML where async throughput matters. Selenium still fits some legacy or grid-based automation cases. The framework should follow the workflow, not vendor preference.

Cloudflare-class targets require layered strategy: residential proxies where appropriate, modern browser automation, realistic timing, fingerprint management, and rate limits that respect the target. We disclose the planned approach during discovery and decline targets we cannot sustain.

Managed APIs win when targets are public, volume is moderate, business logic is simple, and API-shaped delivery is fine. Custom Web Scraping & Automation wins when anti-bot pressure is sophisticated, volume crosses 200K pages per month, login/session state is required, or the data must land in your warehouse with validated schemas.

Most one-to-five-source builds take 2 to 6 weeks from kickoff to production, plus stabilization. Plain HTML targets can be faster. Cloudflare-class detection, login-gated sources, or complex delivery contracts push the timeline longer.

Public data scraping is legal in many US, UK, EU, and Australian contexts when done at reasonable rates and with privacy obligations respected. Personally identifiable information requires a lawful basis under GDPR or CCPA. We scrape public data, treat robots.txt as a strong signal, and decline targets that require breaking authentication or paywalls.

Yes. We deliver structured JSON, Markdown, or warehouse rows with provenance fields such as source URL, fetch timestamp, selector version, and success-rate baseline, so downstream AI agents can cite and audit retrieved data.

Book 30-Minute Discovery Call

If your scraper drifted into broken selectors, your managed API bill crossed the build-vs-buy line, or you needed data flowing from anti-bot-heavy sources into a governed warehouse, the fastest next move is a free 30-minute Strategy Sprint. Low risk, no pitch.

Related Van Data Team service lines

AI Agent Development

Production LangGraph, CrewAI, OpenAI Assistants, and Claude tool-use workflows.

Data Pipeline Development

Airflow, Kafka, dbt, BigQuery, and freshness SLAs for the data behind automation.

Agentic BI & Reporting

Multi-agent reporting and narrative analytics on top of your governed warehouse.