April 16, 2026

Claude Opus 4.7: What Changed and When to Use It for Production AI Agents

Claude Opus 4.7 hits 64.3% SWE-bench Pro -- up 11 points from 4.6. What changed for production AI agents and the routing decision most teams miss.

Article focus

Opus 4.7 is a production upgrade, not just a benchmark chase. Here is what adaptive thinking, cross-session memory, and a 13% coding lift mean for teams running AI agents today.

Claude Opus 4.7 is Anthropic's most capable generally available model, released today. It scores 64.3% on SWE-bench Pro -- an 11-point jump over Opus 4.6 -- and introduces adaptive thinking, cross-session memory, and vision processing up to 2,576px. For teams running AI agents in production, the upgrade is meaningful in ways that benchmark tables don't capture.

Most coverage of a new model launch reads like a press release. This is not that. What follows is a practical breakdown of what Opus 4.7 actually changes, where the benchmark numbers translate to real workflow improvements, and how to decide whether it belongs in your production stack today or in three months.

Key Takeaways

- Claude Opus 4.7 scores 64.3% on SWE-bench Pro, ahead of GPT-5.4 (57.7%) and Gemini 3.1 Pro (54.2%), and resolves 3x as many production coding tasks as Opus 4.6 on Rakuten's internal production-codebase benchmark.

- Adaptive thinking auto-scales compute based on task complexity -- Opus 4.7 thinks harder on hard problems and responds faster on simple ones, which matters for agents that mix routine tool calls with complex decisions.

- Cross-session memory lets agents carry context across sessions, which changes what's practical for long-running research, RevOps, and data pipeline workflows.

- The $5/$25 per million token pricing is unchanged from Opus 4.6, but prompt caching cuts the cost of cached input by up to 90% for agents with repeated system prompts.

- Use Opus 4.7 for long-horizon agentic tasks, complex coding, and high-stakes decisions. Use Sonnet 4.6 for most standard agent steps. Use Haiku 4.5 for high-volume retrieval and classification.

What's new in Claude Opus 4.7

The four changes that matter operationally: adaptive thinking, cross-session memory, vision resolution, and coding performance.

Adaptive thinking

Opus 4.7 automatically adjusts how much computation it allocates based on task complexity. On a routine extraction or a straightforward classification, it responds quickly. On a multi-step code debugging task or a complex judgment call with conflicting signals, it allocates more internal reasoning before returning an answer.

For production agents, this reduces a common tradeoff. Previously, teams had to choose between Opus (thorough but slow on simple tasks) and Sonnet (fast but occasionally shallow on hard ones). Adaptive thinking makes Opus 4.7 better calibrated across that range.

The practical implication: workflows that mix low-complexity tool calls with occasional high-complexity decisions no longer need to split routing as aggressively to manage latency. Opus 4.7 handles the variance.

Improved long-horizon autonomy and cross-session memory

Opus 4.7 carries context across sessions. An agent running a multi-day research workflow can pick up where it left off, reference prior decisions, and update its understanding without the operator re-injecting context at the start of every run.

This matters most for three workflow types: autonomous research agents that brief teams weekly, support triage systems that track case history across interactions, and data pipeline agents that monitor and adjust behavior based on what broke last week. In all three cases, the prior model required either a manual context handoff or a context-injection step that added latency and cost. Opus 4.7 removes that bottleneck for supported memory patterns.

Vision upgrade: 2,576px resolution

Opus 4.7 processes images up to 2,576 pixels on the long edge -- more than three times the resolution of prior Claude models. For agents that analyze dashboards, parse screenshots of external systems, or review document scans, this closes a longstanding accuracy gap on detail-dense images.

Enhanced coding: SWE-bench Pro 64.3%

On SWE-bench Pro, Opus 4.7 scores 64.3% versus 53.4% for Opus 4.6. That is not a minor iteration. GPT-5.4 scores 57.7% and Gemini 3.1 Pro scores 54.2% on the same benchmark.

On SWE-bench Verified, the score is 87.6%, up from 80.8% on Opus 4.6. On Rakuten's internal SWE-bench evaluation -- which runs against real production codebases -- Opus 4.7 resolves three times as many tasks as Opus 4.6, with double-digit gains in both Code Quality and Test Quality scores.

On a 93-task internal benchmark, the resolution rate rose 13% over Opus 4.6, and Opus 4.7 solved four tasks that neither Opus 4.6 nor Sonnet 4.6 could solve at all.

These are not marginal improvements on a leaderboard. They represent a real change in what an agent can accomplish on a realistic production codebase.
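
The figures above mix percentage-point gains with relative lifts, so it can help to compute both from the quoted scores. A minimal Python sketch, using only numbers stated in this article (the function names are illustrative):

```python
def point_gain(new: float, old: float) -> float:
    """Absolute gain in percentage points."""
    return round(new - old, 1)


def relative_gain(new: float, old: float) -> float:
    """Gain expressed as a percentage of the old score."""
    return round((new - old) / old * 100, 1)


# Scores quoted in this article (percent of tasks resolved).
swe_bench_pro = {"Opus 4.7": 64.3, "Opus 4.6": 53.4, "GPT-5.4": 57.7}
swe_bench_verified = {"Opus 4.7": 87.6, "Opus 4.6": 80.8}

pro_points = point_gain(swe_bench_pro["Opus 4.7"], swe_bench_pro["Opus 4.6"])
pro_relative = relative_gain(swe_bench_pro["Opus 4.7"], swe_bench_pro["Opus 4.6"])
verified_points = point_gain(swe_bench_verified["Opus 4.7"], swe_bench_verified["Opus 4.6"])
lead_over_gpt = point_gain(swe_bench_pro["Opus 4.7"], swe_bench_pro["GPT-5.4"])

print(f"SWE-bench Pro: +{pro_points} points ({pro_relative}% relative)")
print(f"SWE-bench Verified: +{verified_points} points")
print(f"Lead over GPT-5.4 on SWE-bench Pro: {lead_over_gpt} points")
```

The SWE-bench Pro gain works out to 10.9 points, or roughly 20% in relative terms. The 13% figure comes from the separate 93-task benchmark and is not the same measurement.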

How Opus 4.7 benchmarks against GPT-5.4 and Gemini 3.1 Pro

The full benchmark comparison (sources: SWE-bench Pro Leaderboard, Scale AI; Anthropic Claude Opus):

| Metric | Opus 4.7 | Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |
|---|---|---|---|---|
| SWE-bench Pro | 64.3% | 53.4% | 57.7% | 54.2% |
| SWE-bench Verified | 87.6% | 80.8% | -- | 80.6% |
| Context window | 1M tokens | 1M tokens | -- | -- |
| Input pricing | $5/M tokens | $5/M tokens | -- | $2/M tokens |
| Output pricing | $25/M tokens | $25/M tokens | -- | $12/M tokens |
| Prompt caching savings | up to 90% | up to 90% | -- | -- |
| Max image resolution | 2,576px | ~800px | -- | -- |

A few things in the table are worth noting.

Opus 4.7 leads on every coding benchmark that reflects production conditions. The Rakuten-SWE-Bench result -- 3x more resolved tasks on a real codebase -- is the number that matters most for teams evaluating whether to upgrade their coding agents. Benchmark scores on synthetic tasks are easy to game; production codebase resolution is not.

Gemini 3.1 Pro is cheaper at $2/$12 per million tokens versus $5/$25 for Opus 4.7. If budget is the primary constraint and coding accuracy is less critical, Gemini is a reasonable alternative. But for teams where a single miscoded pipeline or a failed agent step has material downstream cost, the accuracy gap justifies the price difference.

GPT-5.4 is a strong model, particularly on structured reasoning and computer use tasks. It does not lead on the benchmarks most relevant to AI agent development and data engineering workflows.

When to use Opus 4.7 vs Sonnet 4.6 vs Haiku 4.5

The most common mistake with model selection is routing everything through the strongest model to avoid thinking about it. That works for demos. In production, it creates unnecessary cost and latency on steps that don't need it.

A practical routing decision framework:

Use Opus 4.7 when:

- The task requires long-horizon reasoning or multi-step decision chains with dependencies

- An agent is writing, debugging, or reviewing production code

- A high-confidence decision gate needs to be accurate -- approvals, escalation routing, final output review

- Cross-session continuity matters and the workflow runs over multiple days

- The input contains complex visual artifacts like dense dashboards, schematics, or multi-page documents

Use Sonnet 4.6 when:

- The task involves standard tool calling, structured extraction, or moderate-complexity reasoning

- Latency is a visible constraint and the step does not require deep judgment

- The agent is executing a well-defined workflow with low ambiguity

- Cost per workflow completion matters at scale

Use Haiku 4.5 when:

- High-volume classification, retrieval, or summarization with clear schemas

- The task is narrow and the failure mode of a wrong answer is low-cost and recoverable

- You need fast, cheap execution across thousands of calls per hour
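
The three lists above can be read as a priority-ordered rule set. The sketch below is one possible encoding in Python, not a definitive router: the `Step` fields and the `pick_tier` function are hypothetical names invented for illustration, and the returned strings are tier labels rather than API model IDs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    """Hypothetical description of one agent step; every field is illustrative."""
    long_horizon: bool = False          # multi-step decision chain with dependencies
    production_code: bool = False       # writes, debugs, or reviews production code
    decision_gate: bool = False         # approval, escalation routing, final review
    multi_day_continuity: bool = False  # workflow spans several sessions
    dense_visuals: bool = False         # dashboards, schematics, multi-page documents
    narrow_schema: bool = False         # classification or retrieval with a clear schema
    cheap_to_be_wrong: bool = False     # a wrong answer is low-cost and recoverable


def pick_tier(step: Step) -> str:
    """Return a model tier label, checking the most demanding criteria first."""
    needs_opus = (
        step.long_horizon
        or step.production_code
        or step.decision_gate
        or step.multi_day_continuity
        or step.dense_visuals
    )
    if needs_opus:
        return "Opus 4.7"
    if step.narrow_schema and step.cheap_to_be_wrong:
        return "Haiku 4.5"
    # Default for standard tool calling and moderate-complexity steps.
    return "Sonnet 4.6"


print(pick_tier(Step(production_code=True)))
print(pick_tier(Step(narrow_schema=True, cheap_to_be_wrong=True)))
print(pick_tier(Step()))
```

Checking the Opus criteria first means a step that is both narrow and high-stakes still goes to the strongest model. Defaulting to Sonnet keeps the expensive tier opt-in.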

A research agent architecture that has worked well in recent production builds: Opus 4.7 for the planning step, competitor analysis synthesis, and final brief generation; Sonnet 4.6 for search query generation, source evaluation, and intermediate summarization; Haiku 4.5 for retrieval ranking and deduplication. That routing structure cuts per-workflow cost by roughly 60% compared to running everything through Opus while keeping output quality where it needs to be on the steps that matter.
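
To check a savings figure like this against your own traffic, the routing above can be written as a lookup table and priced per step. This is a rough Python sketch under stated assumptions. Only the Opus prices come from this article; the Sonnet and Haiku prices are placeholders to replace with current list prices, and the step names and token counts are hypothetical.

```python
# Step-to-tier assignment as described in the paragraph above.
ROUTING = {
    "planning": "opus",
    "competitor_synthesis": "opus",
    "final_brief": "opus",
    "search_query_generation": "sonnet",
    "source_evaluation": "sonnet",
    "intermediate_summarization": "sonnet",
    "retrieval_ranking": "haiku",
    "deduplication": "haiku",
}

# USD per million tokens as (input, output).
# ASSUMPTION: the sonnet and haiku values are placeholders, not from the article.
PRICES = {"opus": (5.0, 25.0), "sonnet": (3.0, 15.0), "haiku": (1.0, 5.0)}


def step_cost(tier, input_tokens, output_tokens, prices=PRICES):
    """Cost in USD of one step at the given tier."""
    in_price, out_price = prices[tier]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


def workflow_cost(usage, routing, prices=PRICES):
    """usage maps step name -> (input_tokens, output_tokens)."""
    return sum(step_cost(routing[s], i, o, prices) for s, (i, o) in usage.items())


def savings_vs_all_opus(usage, routing=ROUTING, prices=PRICES):
    """Fraction saved by routing, relative to sending every step to Opus."""
    baseline = workflow_cost(usage, {s: "opus" for s in usage}, prices)
    return 1 - workflow_cost(usage, routing, prices) / baseline


# Hypothetical token volumes for one workflow run.
example_usage = {
    "planning": (10_000, 2_000),
    "search_query_generation": (20_000, 3_000),
    "retrieval_ranking": (80_000, 2_000),
    "final_brief": (30_000, 5_000),
}
print(f"Savings vs all-Opus: {savings_vs_all_opus(example_usage):.0%}")
```

The result depends entirely on the token mix and the prices you plug in, so treat the output as an estimate for your workload, not a reproduction of the roughly 60% figure.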

If you're thinking about how to design that kind of routing from scratch, the AI agent ops playbook covers escalation and model routing patterns in more detail.

What Opus 4.7 changes for production AI agent workflows

The benchmark numbers are directionally useful, but they don't answer the practical question: what does this release change for teams already running agents?

Long-running research and RevOps agents

The cross-session memory improvement is the most operationally significant change for research and RevOps workflows. A team running a competitor intelligence agent that generates weekly briefs previously had to either maintain an external memory store and inject it at the start of each run, or accept that the agent would start fresh each session.
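
For reference, the external-store pattern described above can be sketched in a few lines of Python. This illustrates the older manual approach only; it says nothing about how Opus 4.7's own memory works. The file layout, field names, and function names are all hypothetical.

```python
import json
from pathlib import Path


def load_memory(path: Path) -> dict:
    """Return stored memory, or an empty structure on the first run."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {"flagged_signals": [], "priorities": []}


def save_memory(path: Path, memory: dict) -> None:
    """Persist memory so the next run can reload it."""
    path.write_text(json.dumps(memory, indent=2), encoding="utf-8")


def record_signal(memory: dict, signal: str) -> None:
    """Add a signal once, so later runs do not flag it again."""
    if signal not in memory["flagged_signals"]:
        memory["flagged_signals"].append(signal)


def build_context(memory: dict) -> str:
    """Render stored memory as text to inject at the start of a run."""
    signals = [f"- {s}" for s in memory["flagged_signals"]] or ["- (none)"]
    priorities = [f"- {p}" for p in memory["priorities"]] or ["- (none)"]
    return "\n".join(
        ["Previously flagged signals:"] + signals + ["Current priorities:"] + priorities
    )
```

Each run would call `load_memory`, prepend `build_context(...)` to its prompt, and call `save_memory` at the end. Every injected token is billed as input on every run, which is the latency and cost overhead the paragraph above refers to.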

Opus 4.7's cross-session memory removes the re-injection step for supported memory patterns. The agent accumulates a working understanding of which competitors matter, which signals have already been flagged, and what the team's current priorities are -- without the operator manually restating it each run.

For a multi-agent research workflow, this compresses the setup overhead on each weekly run and reduces the risk that stale context leads to duplicate output.

Data pipeline and coding agents

The coding benchmark improvement is not academic for data engineering workflows. Pipeline agents that write and review dbt models, diagnose DAG failures, or generate migration scripts are doing exactly the kind of complex production coding work where Opus 4.7's gains are largest.

On a 93-task benchmark, four tasks could not be solved by Opus 4.6 or Sonnet 4.6. Those tasks represent the tail of hard problems -- edge cases in production codebases, ambiguous requirements with conflicting constraints, debugging problems with non-obvious root causes. In pipeline engineering, these are the tasks that most frequently require a senior engineer to step in. Opus 4.7 resolves more of them autonomously.

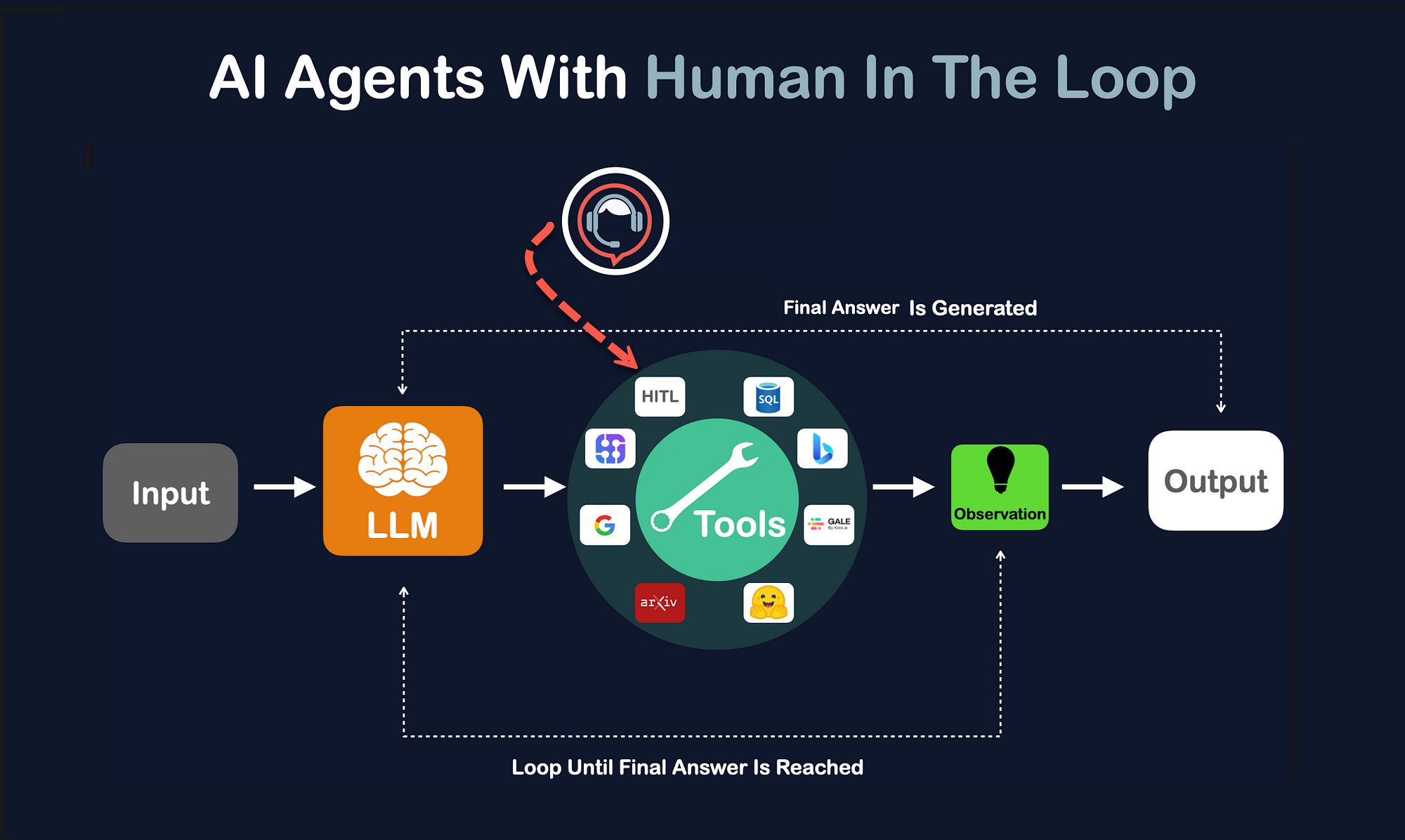

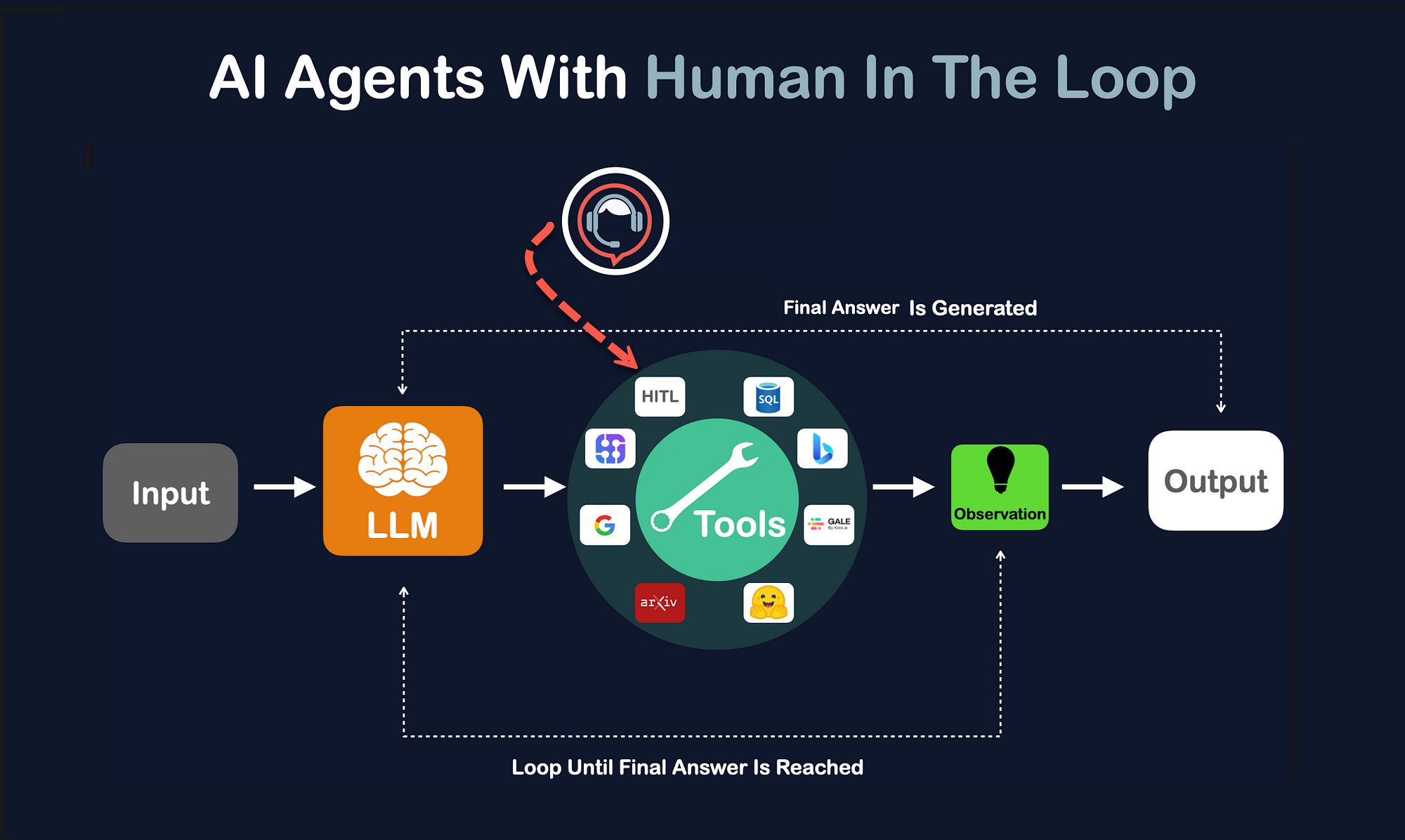

For production AI agent development, this changes the scope of what can be safely delegated to an agent step versus what needs to escalate to a human reviewer. Getting that boundary wrong in either direction -- too broad and humans become bottlenecks, too narrow and bad outputs escape -- is one of the more common sources of production agent failure. We covered how to calibrate that in AI agents with human review.

Cost discipline: prompt caching at $5/$25/M

Opus 4.7 is priced at $5 per million input tokens and $25 per million output tokens. This is unchanged from Opus 4.6. At face value, it's a premium price compared to Gemini 3.1 Pro at $2/$12.

Prompt caching changes the effective cost significantly. For agents with a large, stable system prompt -- instructions, tool schemas, policy documents, retrieval indexes -- Anthropic's prompt caching reduces the cost of re-sending that context by up to 90%. Batch processing adds another 50% reduction for non-latency-sensitive workflows.

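In practice, a research agent with a 100,000-token system prompt running about 100 workflow completions per month sends 10 million system-prompt tokens, which costs roughly $50/month uncached. If every run reads that prompt from cache at the full 90% discount, the cost of the cached portion drops to about $5/month. That is the best case: runs that miss the cache pay full price, so actual savings depend on the hit rate. At that cost structure, the gap between Opus 4.7 and cheaper models narrows materially when caching is implemented correctly.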
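
The arithmetic behind that estimate, as a small Python sketch. The prices and volumes are the ones quoted above. The `hit_rate` parameter is an added assumption that shows how savings shrink when some runs miss the cache, and any extra charge for writing to the cache is ignored.

```python
PRICE_PER_M_INPUT = 5.00        # USD per million input tokens (from this article)
CACHE_DISCOUNT = 0.90           # "up to 90%", treated here as the best case
SYSTEM_PROMPT_TOKENS = 100_000
RUNS_PER_MONTH = 100


def monthly_prefix_cost(tokens, runs, price_per_m, hit_rate=0.0, discount=CACHE_DISCOUNT):
    """Monthly cost in USD of re-sending a fixed prompt prefix.

    hit_rate is the fraction of runs that read the prefix from cache.
    Simplification: cache-write costs are not modelled.
    """
    full_price = tokens * runs * price_per_m / 1_000_000
    return round(full_price * (1 - hit_rate * discount), 2)


uncached = monthly_prefix_cost(SYSTEM_PROMPT_TOKENS, RUNS_PER_MONTH, PRICE_PER_M_INPUT)
best_case = monthly_prefix_cost(SYSTEM_PROMPT_TOKENS, RUNS_PER_MONTH, PRICE_PER_M_INPUT, hit_rate=1.0)
half_hits = monthly_prefix_cost(SYSTEM_PROMPT_TOKENS, RUNS_PER_MONTH, PRICE_PER_M_INPUT, hit_rate=0.5)

print(uncached, best_case, half_hits)  # 50.0 5.0 27.5
```

At a 50% hit rate the same prefix costs $27.50 rather than $5, which is why the cache hit rate matters as much as the headline discount.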

The caveat: prompt caching requires engineering discipline. Caches invalidate when system prompts change, so agents with frequently updated instructions see lower cache hit rates. Building cache-stable prompt architecture is worth the investment for any workflow running more than a few dozen completions per day.
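
One way to keep a prompt cache-stable is to separate the stable instructions from per-run context and mark only the stable block for caching. The Python sketch below builds a request payload and sends nothing. It assumes the `cache_control` block format from Anthropic's prompt caching documentation, so verify the field names against the current API reference. The function names and the `max_tokens` value are illustrative.

```python
import hashlib

MODEL_ID = "claude-opus-4-7"  # model ID as given in this article


def prefix_fingerprint(instructions: str) -> str:
    """Short hash of the stable prefix; a changed hash signals a cache invalidation."""
    return hashlib.sha256(instructions.encode("utf-8")).hexdigest()[:16]


def build_request(instructions: str, run_context: str, user_message: str) -> dict:
    """Assemble a Messages-style payload with the stable block marked for caching."""
    return {
        "model": MODEL_ID,
        "max_tokens": 1024,
        "system": [
            # Stable instructions come first and carry the cache marker.
            {"type": "text", "text": instructions, "cache_control": {"type": "ephemeral"}},
            # Per-run context goes after the cached block so it cannot invalidate it.
            {"type": "text", "text": run_context},
        ],
        "messages": [{"role": "user", "content": user_message}],
    }


request = build_request("You are a research agent.", "Run date: (per-run value)", "Draft this week's brief.")
print(prefix_fingerprint("You are a research agent."), len(request["system"]))
```

Logging the fingerprint on each deploy gives a cheap signal of how often instruction edits reset the cache. Timestamps, run IDs, and other per-run values should stay out of the cached block.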

How to get started: API access and pricing

Claude Opus 4.7 is available via the Claude API with model ID claude-opus-4-7. As of today, it's also live on Amazon Bedrock, Microsoft Azure (Foundry), and Vertex AI.

Pricing summary:

- Input: $5 per million tokens

- Output: $25 per million tokens

- Prompt caching: up to 90% reduction on cached input

- Batch processing: 50% reduction for offline workloads

- Context window: 1 million tokens
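
As a quick illustration of how these rates translate into per-request cost, here is a minimal Python sketch. It assumes the batch discount applies to both input and output tokens and leaves caching out. The token counts in the example are hypothetical.

```python
INPUT_PRICE = 5.00     # USD per million input tokens
OUTPUT_PRICE = 25.00   # USD per million output tokens
BATCH_DISCOUNT = 0.50  # assumed to apply to the whole request


def request_cost(input_tokens: int, output_tokens: int, batch: bool = False) -> float:
    """Estimated cost in USD of one request at the list prices above."""
    cost = (input_tokens * INPUT_PRICE + output_tokens * OUTPUT_PRICE) / 1_000_000
    if batch:
        cost *= 1 - BATCH_DISCOUNT
    return round(cost, 6)


# Hypothetical request: 20,000 input tokens and 2,000 output tokens.
print(request_cost(20_000, 2_000))              # 0.15
print(request_cost(20_000, 2_000, batch=True))  # 0.075
```

Output tokens cost five times as much as input tokens, so a step that generates long responses can dominate the bill even when its prompt is short.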

For teams evaluating whether to upgrade agents from Opus 4.6 to Opus 4.7: the price is the same, so the question is only whether the capability improvement justifies a model migration. Given the 13% coding benchmark lift and the cross-session memory improvement, workflows involving complex code generation or multi-session continuity are strong upgrade candidates. Workflows already performing well on Sonnet 4.6 should stay on Sonnet.

The takeaway

Claude Opus 4.7 is a production upgrade, not just a benchmark chase. The 13% coding lift, cross-session memory, and adaptive thinking all address real failure modes in production agent workflows -- not edge cases on synthetic evaluations.

The benchmark numbers matter less than the routing decision: most teams don't need Opus 4.7 on every agent step, and running it everywhere is a cost mistake. The teams that get the most from this release will identify the specific workflow steps where Opus 4.7's depth changes the output meaningfully, route those steps to the new model, and keep Sonnet where Sonnet is already sufficient.

If you're building or hardening a production AI agent and want a second opinion on where Opus 4.7 belongs in the workflow, see the AI agent development service or the AI support triage case study for a real production example of how model routing and human review work together under load.

Article FAQ

What is Claude Opus 4.7?

Claude Opus 4.7 is Anthropic's most capable generally available model as of April 2026. It introduces adaptive thinking, cross-session memory, vision processing up to 2,576px, and a 13% lift on coding benchmarks over Opus 4.6. It is available via the Claude API with model ID claude-opus-4-7, as well as Amazon Bedrock, Azure, and Vertex AI.

How does Opus 4.7 differ from Opus 4.6?

The main functional differences are adaptive thinking, cross-session memory, higher vision resolution, and stronger coding performance. On SWE-bench Pro, Opus 4.7 scores 64.3% versus 53.4% for Opus 4.6. On Rakuten's production codebase benchmark, Opus 4.7 resolves three times as many tasks as Opus 4.6. Pricing is unchanged at $5/$25 per million tokens.

How does Opus 4.7 compare with GPT-5.4?

On the benchmarks most relevant to AI agent development -- SWE-bench Pro and production codebase resolution -- Opus 4.7 leads GPT-5.4 (64.3% vs 57.7% on SWE-bench Pro). For teams building agents that write, debug, or review production code, Opus 4.7 is the stronger choice based on current benchmarks.

When should I use Opus 4.7 instead of Sonnet 4.6?

Use Opus 4.7 for long-horizon reasoning, complex coding, high-stakes decision gates, and workflows that benefit from cross-session memory. Use Sonnet 4.6 for standard tool calling, structured extraction, and moderate-complexity agent steps where latency or cost matters.

How much does Claude Opus 4.7 cost?

$5 per million input tokens and $25 per million output tokens -- the same as Opus 4.6. Prompt caching reduces effective input cost by up to 90% for agents with stable system prompts. Batch processing adds another 50% reduction for non-latency-sensitive workflows.

Is Claude Opus 4.7 available on Amazon Bedrock?

Yes. Opus 4.7 launched on Amazon Bedrock on April 16, 2026, alongside general availability via the Claude API. It is also available on Microsoft Azure (Foundry) and Google Cloud Vertex AI.
