April 16, 2026

GPT-5.4 vs Claude Opus 4.7: Which Model for Production AI Agents?

GPT-5.4 leads on computer use (75% OSWorld). Opus 4.7 leads on agentic coding (64.3% SWE-bench Pro). The routing framework production teams actually use.

Neither GPT-5.4 nor Claude Opus 4.7 is the better model in every situation. GPT-5.4 leads on computer use and desktop automation, scoring 75% on OSWorld and exceeding human expert performance. Claude Opus 4.7 leads on agentic coding and complex writing, scoring 64.3% on SWE-bench Pro versus GPT-5.4's 57.7%. The production decision is not which model to pick -- it's which tasks go where.

Teams that route blindly to one model pay for capability they don't need on simple steps, or accept lower quality on the steps that matter most. Both models launched within six weeks of each other, both carry a 1M token context window, and both are available on the major cloud platforms. The differences that matter are narrower and more specific than most comparison articles suggest.

Key Takeaways

- Claude Opus 4.7 leads on agentic coding: 64.3% on SWE-bench Pro vs GPT-5.4's 57.7%. On Rakuten's real-codebase benchmark, it resolves 3x as many production tasks as Opus 4.6.

- GPT-5.4 leads on computer use: 75% OSWorld, the first AI model to exceed human expert performance (72.4%) on desktop automation.

- GPT-5.4 standard API is cheaper at $2.50/$15 per million tokens vs Opus 4.7's $5/$25, but Claude's 90% prompt caching discount closes the gap for agents with stable system prompts.

- GPT-5.4 Pro pricing is $30/$180 per million tokens -- a different cost category entirely, appropriate for specific high-stakes use cases only.

- Most production agent architectures use both models, routing by task type rather than committing to one.

The benchmark comparison

Before the decision framework, the numbers. Both models score well across the board, but each has a clear lane.

| Benchmark | Claude Opus 4.7 | GPT-5.4 | Winner |
|---|---|---|---|
| SWE-bench Pro (agentic coding) | 64.3% | 57.7% | Claude |
| SWE-bench Verified | 87.6% | ~77.2% | Claude |
| OSWorld (computer use) | ~72.7% | 75.0% | GPT-5.4 |
| Writing quality (blind eval, Q1 2026) | 47% preferred | 29% preferred | Claude |
| Context window | 1M tokens | 1M tokens | Tie |
| Standard input pricing | $5.00/M | $2.50/M | GPT-5.4 |
| Standard output pricing | $25.00/M | $15.00/M | GPT-5.4 |
| Prompt/cache savings | up to 90% | 50% (auto) | Claude |
| Cross-session memory | Yes | Limited | Claude |

Sources: SWE-bench Pro Leaderboard (Scale AI); Anthropic, Claude Opus.

The table tells a reasonably clean story. GPT-5.4 wins on desktop automation and standard API pricing. Claude Opus 4.7 wins on coding accuracy, writing quality, and workflows that need persistent memory. Neither model dominates across every dimension, which is exactly why production teams route across both.

Where GPT-5.4 wins: computer use and desktop automation

GPT-5.4's most significant capability is native computer use. It scores 75% on OSWorld -- a benchmark that measures an agent's ability to navigate desktop applications via screenshots and keyboard and mouse input -- and it's the first general-purpose model to exceed human expert performance, which sits at 72.4%.

This is not a plugin or a wrapper. Computer use is built into the model. In the API, GPT-5.4 can operate applications directly, issue Playwright commands, click interface elements, and handle multi-step workflows across standard desktop software.

Consider what this means for a data engineering team running UI-dependent processes. A pipeline that requires logging into a vendor portal, extracting a report, reformatting it, and pushing it downstream can now run as an agent step instead of a brittle script. The failure mode changes: instead of a selector breaking when the vendor redesigns their UI, the agent reads the screen and adapts.

GPT-5.4 also ships with Tool Search, a new API feature that lets agents use lightweight tool indices instead of passing full descriptions of every available tool in the system prompt. For agents connected to HubSpot, Zendesk, Slack, and internal APIs simultaneously, this reduces token overhead and improves tool selection accuracy.
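To make the token-overhead argument concrete, here is a minimal sketch of the general idea behind a lightweight tool index. It is not the Tool Search API itself: the tool names, the `ToolSpec` type, and the keyword-matching `search_tools` helper are all hypothetical, and a real system would rank tools far more carefully. The point is only that the agent sees short summaries up front and loads full schemas for the few tools that match.

```python
from dataclasses import dataclass


@dataclass
class ToolSpec:
    """Hypothetical tool record: a one-line summary plus the full schema."""
    name: str
    summary: str
    schema: dict


# Illustrative registry; names and schemas are made up for this sketch.
REGISTRY = [
    ToolSpec(
        "hubspot_update_deal",
        "Update a deal stage or amount in the CRM",
        {"type": "object", "properties": {"deal_id": {"type": "string"}}},
    ),
    ToolSpec(
        "zendesk_create_ticket",
        "Open a support ticket for a customer",
        {"type": "object", "properties": {"subject": {"type": "string"}}},
    ),
    ToolSpec(
        "slack_post_message",
        "Post a message to a Slack channel",
        {"type": "object", "properties": {"channel": {"type": "string"}}},
    ),
]


def build_index(registry):
    """Return the lightweight index: names and summaries, no schemas."""
    return [{"name": t.name, "summary": t.summary} for t in registry]


def search_tools(registry, query, limit=2):
    """Rank tools by naive keyword overlap with the query."""
    # Ignore very short words so articles like "a" do not create false matches.
    words = {w for w in query.lower().split() if len(w) > 2}
    scored = []
    for tool in registry:
        text = tool.name.replace("_", " ") + " " + tool.summary
        score = len(words & set(text.lower().split()))
        if score:
            scored.append((score, tool))
    # Sort on the score only; Python's sort is stable, so ties keep registry order.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [tool for _, tool in scored[:limit]]
```

In this sketch, only the output of `build_index` would go into the prompt. The full `schema` of a tool is sent once `search_tools` has selected it for the current step.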

Use GPT-5.4 for:

- RPA-style agents that operate desktop or web applications

- Browser automation workflows where the agent interacts with the UI rather than scraping HTML

- Agents managing large tool registries where Tool Search reduces system prompt overhead

- Workflows where computer-use reliability (75% on OSWorld) matters more than coding precision

If you're building a production AI agent development workflow that involves UI automation alongside data engineering steps, GPT-5.4 is likely the right model for the computer use layer.

Where Claude Opus 4.7 wins: agentic coding and writing

Claude Opus 4.7's strongest performance is on tasks that involve writing and reviewing production code, or producing complex written outputs where quality and instruction-following matter.

On SWE-bench Pro, Opus 4.7 scores 64.3% versus GPT-5.4's 57.7%. That 6.6-point gap is larger than it looks on a benchmark measuring real GitHub issues in production codebases -- not synthetic puzzles. On Rakuten's internal benchmark, which runs against actual production code, Opus 4.7 resolves three times as many tasks as Opus 4.6, with double-digit gains in both Code Quality and Test Quality. On a 93-task internal benchmark, four tasks that neither Opus 4.6 nor Sonnet 4.6 could solve were resolved by Opus 4.7.

The writing quality gap is also consistent. In blind human evaluations in Q1 2026, Claude-generated content was preferred 47% of the time versus 29% for GPT-5.4. For teams running research agents that produce analyst briefs or executive summaries, that preference gap shows up in real output quality.

Opus 4.7's cross-session memory changes what's practical for long-running workflows. A research agent tracking competitor activity across weeks, a support triage system that learns from prior cases, or a RevOps agent that accumulates understanding of deal patterns -- these all benefit from context that persists without manual re-injection. GPT-5.4 has limited support for this pattern; Opus 4.7 handles it natively.

A team running a multi-agent research workflow recently restructured their architecture after testing both models on brief generation. GPT-5.4 produced faster outputs on search steps. On the synthesis layer -- consolidating ten sources into a concise executive summary -- Opus 4.7 produced outputs the team trusted enough to send to leadership without editing. The routing decision made itself.

Use Claude Opus 4.7 for:

- Coding agents that write, review, or debug production code

- Research agents that synthesize sources into polished written output

- Long-running workflows that benefit from cross-session memory

- Complex decision gates where output quality has downstream business consequences

- Agentic pipelines involving dbt models, DAG generation, or migration scripts

The AI agent ops playbook covers how to design escalation and confidence routing once the base model selection is working.

The pricing math: GPT-5.4 is cheaper, until it isn't

GPT-5.4 standard API pricing is $2.50 per million input tokens and $15 per million output tokens. Claude Opus 4.7 runs $5/$25. At face value, GPT-5.4 is 50% cheaper on input and 40% cheaper on output.

Two things shift this comparison.

First, prompt caching. Anthropic's prompt caching reduces input costs by up to 90% for agents with large, stable system prompts. A research agent with a 100,000-token system prompt -- tool schemas, instructions, policy documents, retrieval context -- sees the effective input cost on that cached portion drop from $0.50 per run to $0.05. At 100 workflow completions per month, that's $50/month versus $5/month on the cached portion alone. GPT-5.4's 50% automatic cache discount is useful, but Claude's 90% ceiling changes the math for well-architected agents running at volume.

Second, GPT-5.4 Pro. For maximum-performance use cases, OpenAI offers GPT-5.4 Pro at $30/$180 per million tokens. That's twelve times the standard GPT-5.4 price on both input and output. At that cost, it's a specialized tool for specific high-stakes tasks, not a default model for production agent workflows.

The practical guidance: run the math on your specific system prompt size and call volume before assuming GPT-5.4 is materially cheaper at scale. For agents with well-engineered, stable system prompts, the cost gap under caching is smaller than the sticker price suggests.
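The caching arithmetic above can be sketched as a small calculator. This is a minimal sketch under stated assumptions: the prices and discounts are the figures quoted in this article, every run is assumed to hit the cache, and cache-write charges and cache expiry are ignored. `Pricing` and `cost_per_run` are hypothetical names, not part of any SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_m: float     # USD per million input tokens
    output_per_m: float    # USD per million output tokens
    cache_discount: float  # fraction taken off cached input tokens


# Figures as quoted in this article; check current price sheets before relying on them.
OPUS_4_7 = Pricing(input_per_m=5.00, output_per_m=25.00, cache_discount=0.90)
GPT_5_4 = Pricing(input_per_m=2.50, output_per_m=15.00, cache_discount=0.50)


def cost_per_run(pricing, cached_in, fresh_in, out):
    """Estimate USD cost of one run, assuming the cached tokens are a cache hit."""
    cached = cached_in / 1_000_000 * pricing.input_per_m * (1 - pricing.cache_discount)
    fresh = fresh_in / 1_000_000 * pricing.input_per_m
    output = out / 1_000_000 * pricing.output_per_m
    return cached + fresh + output


if __name__ == "__main__":
    # The 100,000-token system prompt from the example above, with and without caching.
    uncached = cost_per_run(OPUS_4_7, cached_in=0, fresh_in=100_000, out=0)
    cached = cost_per_run(OPUS_4_7, cached_in=100_000, fresh_in=0, out=0)
    print(f"Opus 4.7 prefix per run: ${uncached:.2f} uncached, ${cached:.2f} cached")
```

Under these assumptions, the cached 100,000-token prefix costs about $0.05 per run on Opus 4.7 and about $0.125 per run on GPT-5.4. Add your own per-run fresh input and output token counts to see how much of the total bill the prefix actually represents.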

The production routing framework

Most teams that have shipped production agents are not running a single model. They route by task type, using the model best suited to each step.

A research agent architecture that reflects current best practices:

Planning and task decomposition -- Claude Opus 4.7. Long-horizon reasoning, accurate decomposition of ambiguous briefs, and cross-session memory on prior research make this the right model for the step that sets up everything downstream.

Search and retrieval steps -- Sonnet 4.6 or Haiku 4.5. Straightforward classification and ranking at lower cost per call.

Computer use steps (portal access, UI navigation, screen-based data extraction) -- GPT-5.4. Native computer use at 75% OSWorld accuracy handles these steps more reliably.

Code generation and review -- Claude Opus 4.7. The SWE-bench Pro gap is consistent enough to trust it on production code tasks.

Final brief or report synthesis -- Claude Opus 4.7. Writing quality and instruction-following produce outputs that require less editing before delivery.
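The step-to-model assignments above can be sketched as a plain routing table. This is an illustration, not a prescribed implementation: the `Step` enum and `route` helper are hypothetical, and the model labels are placeholders rather than real API model identifiers.

```python
from enum import Enum


class Step(Enum):
    PLANNING = "planning"
    SEARCH = "search"
    COMPUTER_USE = "computer_use"
    CODE = "code"
    SYNTHESIS = "synthesis"


# Placeholder labels mirroring the assignments described above.
ROUTES = {
    Step.PLANNING: "claude-opus-4.7",
    Step.SEARCH: "claude-sonnet-4.6",
    Step.COMPUTER_USE: "gpt-5.4",
    Step.CODE: "claude-opus-4.7",
    Step.SYNTHESIS: "claude-opus-4.7",
}


def route(step: Step) -> str:
    """Return the model label assigned to a workflow step."""
    try:
        return ROUTES[step]
    except KeyError:
        # Fail loudly so a newly added step cannot silently fall through.
        raise ValueError(f"no model assigned for step {step!r}") from None
```

Keeping the assignments in one table means a change of model for a given step is a one-line edit.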

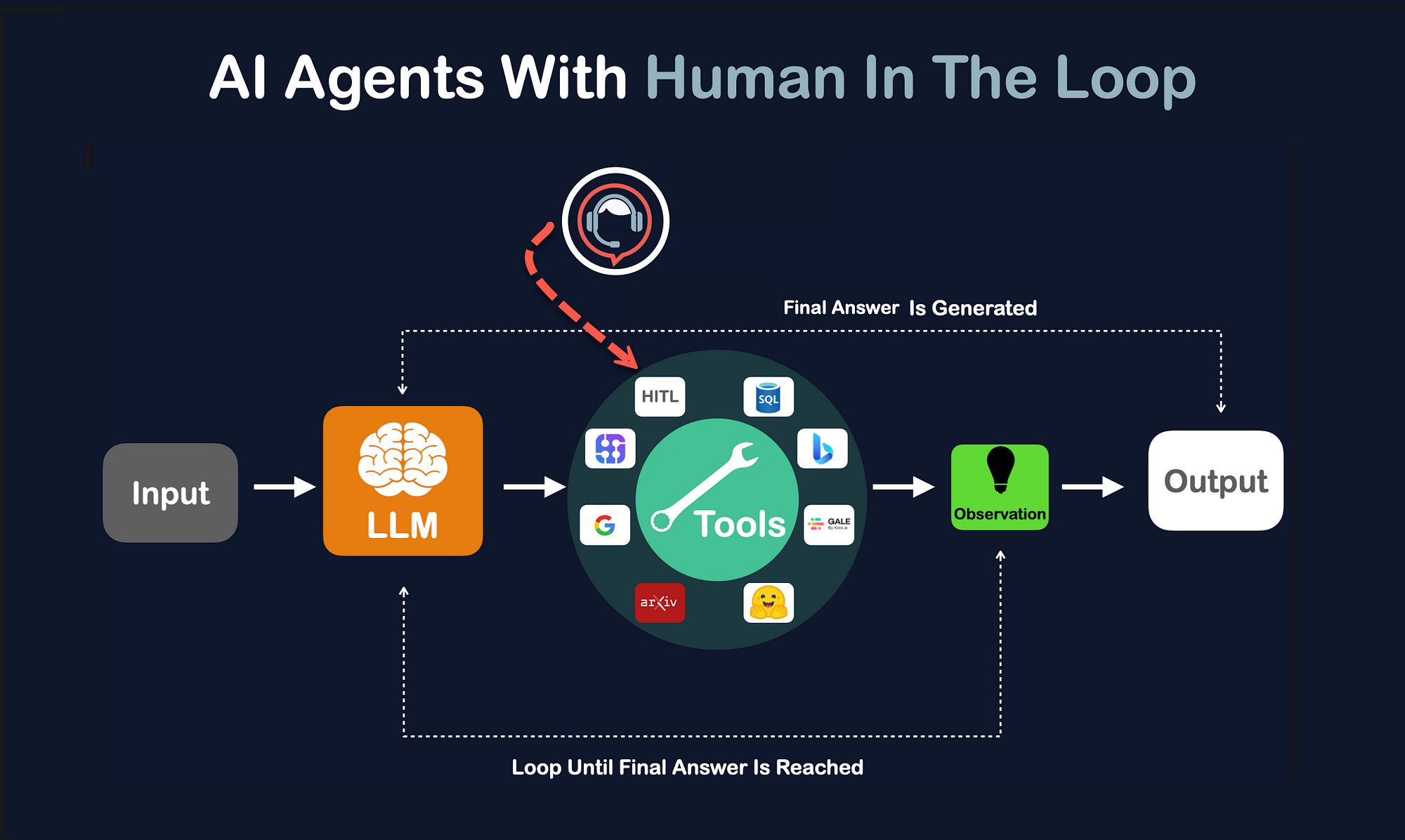

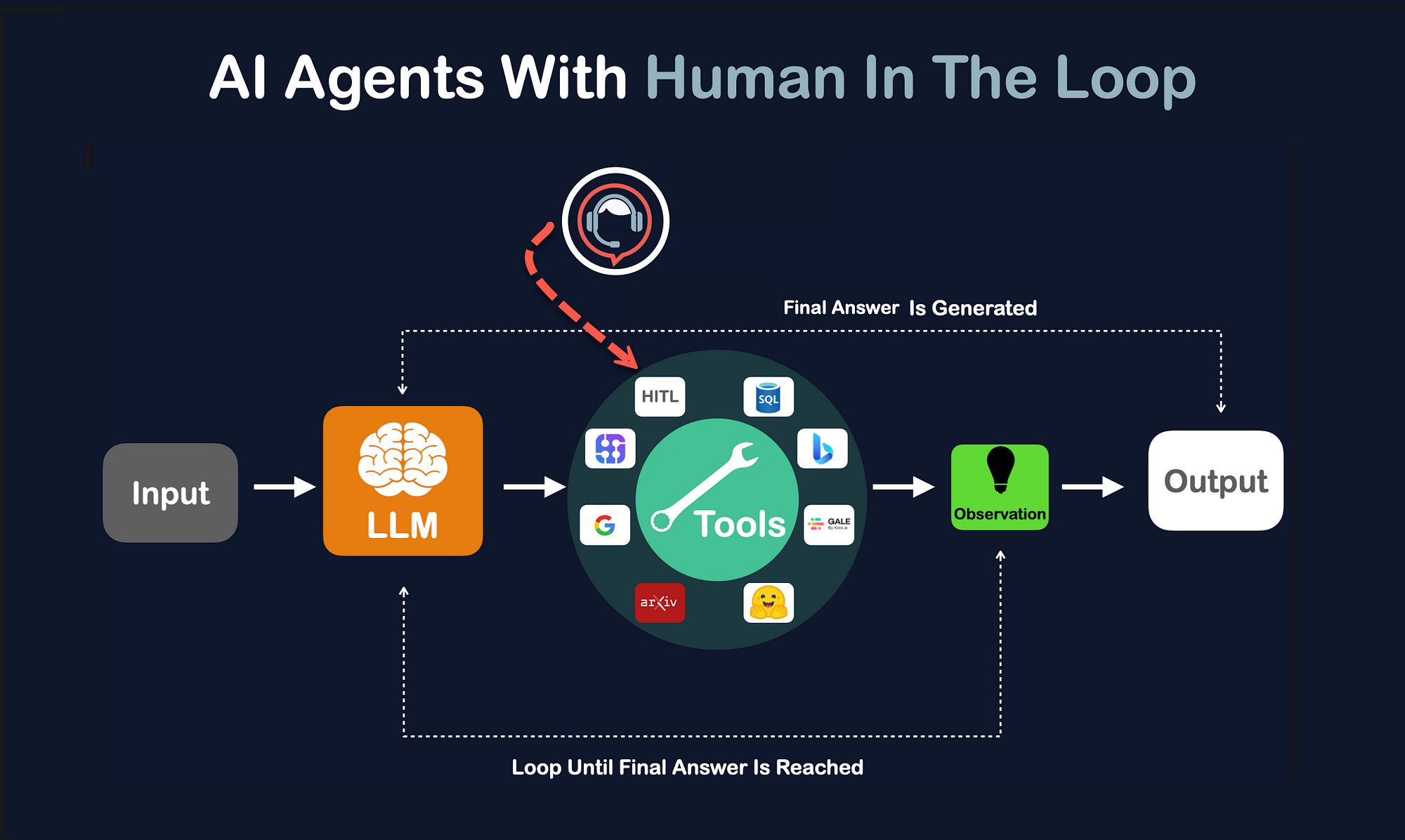

The architecture question is not "GPT-5.4 or Claude Opus 4.7?" It's "which model handles each step well enough to trust the output, at what cost, and where does a wrong answer have material downstream consequences?" Placing human review at those consequence points -- rather than at every step -- keeps the architecture lean without sacrificing reliability. We covered that calibration in AI agents with human review.

This routing pattern also has a practical implication for teams starting fresh: don't lock your agent framework to one model provider. Frameworks such as LangGraph and CrewAI support multi-model routing. Building that flexibility in from the start costs little and avoids a painful refactor when the next model release shifts the benchmark landscape again.
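One way to keep that flexibility, independent of any particular framework, is to hide each provider behind the same small interface. The sketch below is an assumption-laden illustration: `ModelRegistry` is a hypothetical class, and the two stub functions stand in for real provider SDK calls, which are not shown here.

```python
from typing import Callable, Dict

# A backend is any callable that takes a prompt and returns text.
Backend = Callable[[str], str]


class ModelRegistry:
    """Maps workflow steps to interchangeable model backends."""

    def __init__(self) -> None:
        self._backends: Dict[str, Backend] = {}
        self._assignments: Dict[str, str] = {}

    def register(self, name: str, backend: Backend) -> None:
        self._backends[name] = backend

    def assign(self, step: str, name: str) -> None:
        if name not in self._backends:
            raise KeyError(f"unknown backend: {name}")
        self._assignments[step] = name

    def run(self, step: str, prompt: str) -> str:
        # Calling code names the step, never the provider.
        return self._backends[self._assignments[step]](prompt)


# Stub backends; in a real system each would wrap a provider's client.
def fake_opus(prompt: str) -> str:
    return f"[opus] {prompt}"


def fake_gpt(prompt: str) -> str:
    return f"[gpt] {prompt}"


registry = ModelRegistry()
registry.register("opus", fake_opus)
registry.register("gpt", fake_gpt)
registry.assign("synthesis", "opus")
```

Reassigning a step after a new release is then a single call, such as `registry.assign("synthesis", "gpt")`, and the code that calls `registry.run("synthesis", ...)` stays the same.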

The takeaway

The GPT-5.4 vs Claude Opus 4.7 comparison is not a winner-takes-all question. GPT-5.4 made a real advance on computer use and desktop automation. Claude Opus 4.7 made a real advance on agentic coding and cross-session memory. Both run on a 1M token context window, both are available on the major platforms, and both are priced for production use.

The teams that get the most from either release will treat model selection as a routing architecture decision rather than a vendor preference. Pick the model that handles each step reliably enough to trust its output at the cost you can justify -- and build in the flexibility to change that assignment when the next release shifts the benchmark.

If you're scoping a production AI agent and want a second opinion on how to architect the model routing layer, see the AI agent development service or the AI support triage case study for a production example of multi-model routing under real load.

Article FAQ

Questions readers usually ask next.

These short answers clarify the practical follow-up questions that often come after the main article.

Which model is better, GPT-5.4 or Claude Opus 4.7?

It depends on the task. GPT-5.4 leads on computer use (75% OSWorld, exceeding human expert performance). Claude Opus 4.7 leads on agentic coding (64.3% SWE-bench Pro vs 57.7%), writing quality, and cross-session memory. Neither model dominates across every dimension; production teams route to each based on task type.

Which model should I use for production AI agents?

For agentic coding, data pipeline agents, and writing-intensive workflows: Claude Opus 4.7. For desktop automation, UI-dependent workflows, and agents managing large tool ecosystems: GPT-5.4. For most standard tool calling and extraction steps: Sonnet 4.6 or a comparable mid-tier model. Most production architectures use more than one model.

Is GPT-5.4 cheaper than Claude Opus 4.7?

At sticker price, yes: $2.50/$15 versus $5/$25 per million tokens. But Claude Opus 4.7's prompt caching reduces effective input costs by up to 90% for agents with stable system prompts. For well-architected agents running high volumes, the practical cost gap is smaller than the headline pricing suggests. GPT-5.4 Pro is $30/$180 -- a very different cost category.

Which model is better for coding?

Claude Opus 4.7. It scores 64.3% on SWE-bench Pro versus 57.7% for GPT-5.4, and it resolves 3x as many tasks as Opus 4.6 on Rakuten's production codebase benchmark. The gap is consistent across benchmarks measuring real code quality rather than synthetic tasks.

Can GPT-5.4 handle computer use?

Yes. GPT-5.4 scores 75% on OSWorld and is the first general-purpose model to exceed human expert performance on desktop automation. Computer use is native to the model, not a plugin. Claude Opus 4.7 scores approximately 72.7% on the same benchmark.

Can I use both models in the same workflow?

Yes, and most production teams do. LangGraph, CrewAI, and other agent frameworks support routing across multiple model providers. The standard pattern is to select a model per step based on what that step requires -- routing computer use to GPT-5.4 and code generation or synthesis to Claude Opus 4.7 within the same workflow.
