April 5, 2026

Resilient Web Scraping Pipelines with Monitoring and Fallbacks

How to build scraping systems that survive dynamic targets, proxy failures, selector drift, and downstream delivery issues without turning into constant fire drills.

Article focus

Most scraping failures do not come from the parser alone. They come from weak runtime design around browser behavior, retry policy, proxy health, and downstream validation.

Think in terms of a collection system, not a script

If the data source is dynamic, protected, or high value, a one-off script is rarely enough.

You need a collection system with:

- browser automation

- session and credential handling

- proxy strategy

- retries and backoff

- output validation

- downstream delivery checks

That is the difference between a weekend script and a web scraping and automation workflow that can survive production conditions.
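As one illustration of the "retries and backoff" item, here is a minimal Python sketch of exponential backoff with jitter. The names `TransientFetchError`, `backoff_delays`, and `fetch_with_retries` are hypothetical, and the attempt count and delay values are placeholders rather than recommendations.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientFetchError(Exception):
    """Hypothetical error for failures worth retrying (timeouts, throttling, resets)."""


def backoff_delays(attempts: int, base: float = 1.0, cap: float = 60.0) -> list[float]:
    """Exponential ceilings: base, 2*base, 4*base, ... each capped at `cap`."""
    return [min(cap, base * (2 ** i)) for i in range(attempts)]


def fetch_with_retries(
    fetch: Callable[[], T],
    max_attempts: int = 4,
    base: float = 1.0,
    cap: float = 60.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `fetch` until it succeeds or `max_attempts` is exhausted."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    delays = backoff_delays(max_attempts - 1, base, cap)
    for attempt in range(max_attempts):
        try:
            return fetch()
        except TransientFetchError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            # Jitter: sleep a random amount up to the exponential ceiling,
            # so parallel workers do not retry in lockstep.
            sleep(random.uniform(0, delays[attempt]))
    raise RuntimeError("unreachable")
```

Passing `sleep` as a parameter is a design choice for testability: a test can substitute a recorder instead of actually waiting.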

Monitor more than success or failure

A scraper can technically "succeed" while still returning incomplete or incorrect data.

That is why strong monitoring should include:

- response and page-load failure rates

- selector miss frequency

- proxy pool health

- extraction completeness

- downstream schema validation

When teams only track job success, they miss the quiet degradations that destroy trust later.
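A rough sketch of what tracking more than job success could look like in Python. The `RunMetrics` class, its field names, and the threshold defaults are all assumptions made for illustration; real thresholds depend on the target and on what the data is used for.

```python
from dataclasses import dataclass


def _ratio(part: int, whole: int) -> float:
    """Safe division that treats an empty denominator as 0.0."""
    return part / whole if whole else 0.0


@dataclass
class RunMetrics:
    """Counters collected during one scraping run (hypothetical shape)."""
    pages_attempted: int = 0
    pages_failed: int = 0
    selector_lookups: int = 0
    selector_misses: int = 0
    records_extracted: int = 0
    records_complete: int = 0  # records with every required field populated

    @property
    def page_failure_rate(self) -> float:
        return _ratio(self.pages_failed, self.pages_attempted)

    @property
    def selector_miss_rate(self) -> float:
        return _ratio(self.selector_misses, self.selector_lookups)

    @property
    def completeness(self) -> float:
        return _ratio(self.records_complete, self.records_extracted)

    def degradations(
        self,
        max_page_failure: float = 0.05,
        max_selector_miss: float = 0.02,
        min_completeness: float = 0.95,
    ) -> list[str]:
        """Return the names of metrics outside their (assumed) thresholds."""
        issues = []
        if self.page_failure_rate > max_page_failure:
            issues.append("page_failure_rate")
        if self.selector_miss_rate > max_selector_miss:
            issues.append("selector_miss_rate")
        # An empty run reports 0.0 completeness, so it is flagged
        # instead of passing silently.
        if self.completeness < min_completeness:
            issues.append("completeness")
        return issues
```

A run can finish without errors and still return a non-empty list from `degradations()`, which is the case job-level success tracking misses.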

The quiet failure problem

Quiet failures are the most expensive ones.

The job still finishes, but:

- a price field turns blank

- a listing count drops unexpectedly

- a location selector starts returning the wrong region

- a downstream warehouse table fills with partial records

Monitoring should surface these signals before the business finds them the hard way.
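Two of these signals, blank fields and an unexpected drop in listing count, can be checked with a few lines of Python. This is a sketch under assumptions: records are plain dictionaries, a baseline count from earlier runs is available, and the function names and tolerances are invented for the example.

```python
from typing import Any, Mapping, Sequence


def is_blank(value: Any) -> bool:
    """Treat None and empty or whitespace-only strings as blank."""
    return value is None or (isinstance(value, str) and not value.strip())


def blank_rate(records: Sequence[Mapping[str, Any]], field: str) -> float:
    """Share of records where `field` is blank; an empty batch counts as fully blank."""
    if not records:
        return 1.0
    return sum(is_blank(r.get(field)) for r in records) / len(records)


def count_drop(current: int, baseline: int) -> float:
    """Fractional drop versus the baseline (0.0 when the count held or grew)."""
    if baseline <= 0:
        return 0.0
    return max(0.0, (baseline - current) / baseline)


def quiet_failure_signals(
    records: Sequence[Mapping[str, Any]],
    baseline_count: int,
    watched_fields: Sequence[str],
    max_blank_rate: float = 0.05,  # assumed tolerance
    max_drop: float = 0.30,        # assumed tolerance
) -> list[str]:
    """List the quiet-failure signals present in a batch that 'succeeded'."""
    signals = []
    for field in watched_fields:
        if blank_rate(records, field) > max_blank_rate:
            signals.append(f"blank:{field}")
    if count_drop(len(records), baseline_count) > max_drop:
        signals.append("count_drop")
    return signals
```

The wrong-region case needs a different kind of check, such as comparing a field against the value the job expected, which this sketch does not cover.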

Design fallbacks before the site changes

Fallback logic is much easier to add before the first incident.

Common fallback layers include:

- alternate selectors for high-value fields

- browser restarts after repeated instability

- proxy pool switching by geography or risk level

- delayed retry windows when the target starts throttling

- partial delivery paths that quarantine suspect data

These fallbacks let the system degrade gracefully instead of collapsing all at once.
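The first layer, alternate selectors, might be sketched as follows. To avoid tying the example to a specific browser or parsing library, `query` is a stand-in for whatever lookup your tooling provides: it takes a selector string and returns the matched text or `None`. The function name and the selectors in the usage example are hypothetical.

```python
from typing import Callable, Optional, Sequence, Tuple


def extract_with_fallbacks(
    query: Callable[[str], Optional[str]],
    selectors: Sequence[str],
) -> Tuple[Optional[str], Optional[str]]:
    """Try selectors in priority order.

    Returns (value, selector_that_matched), or (None, None) if every
    selector missed. Returning the matching selector lets monitoring
    count how often the primary selector is being bypassed.
    """
    for selector in selectors:
        value = query(selector)
        if value is not None and value.strip():
            return value.strip(), selector
    return None, None


# Usage with a dict standing in for a parsed page:
page = {".price-new": " $19.99 "}
value, matched = extract_with_fallbacks(page.get, [".price", ".price-new"])
```

A rising share of matches on the secondary selector is an early hint that the primary one has drifted, even though extraction still works.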

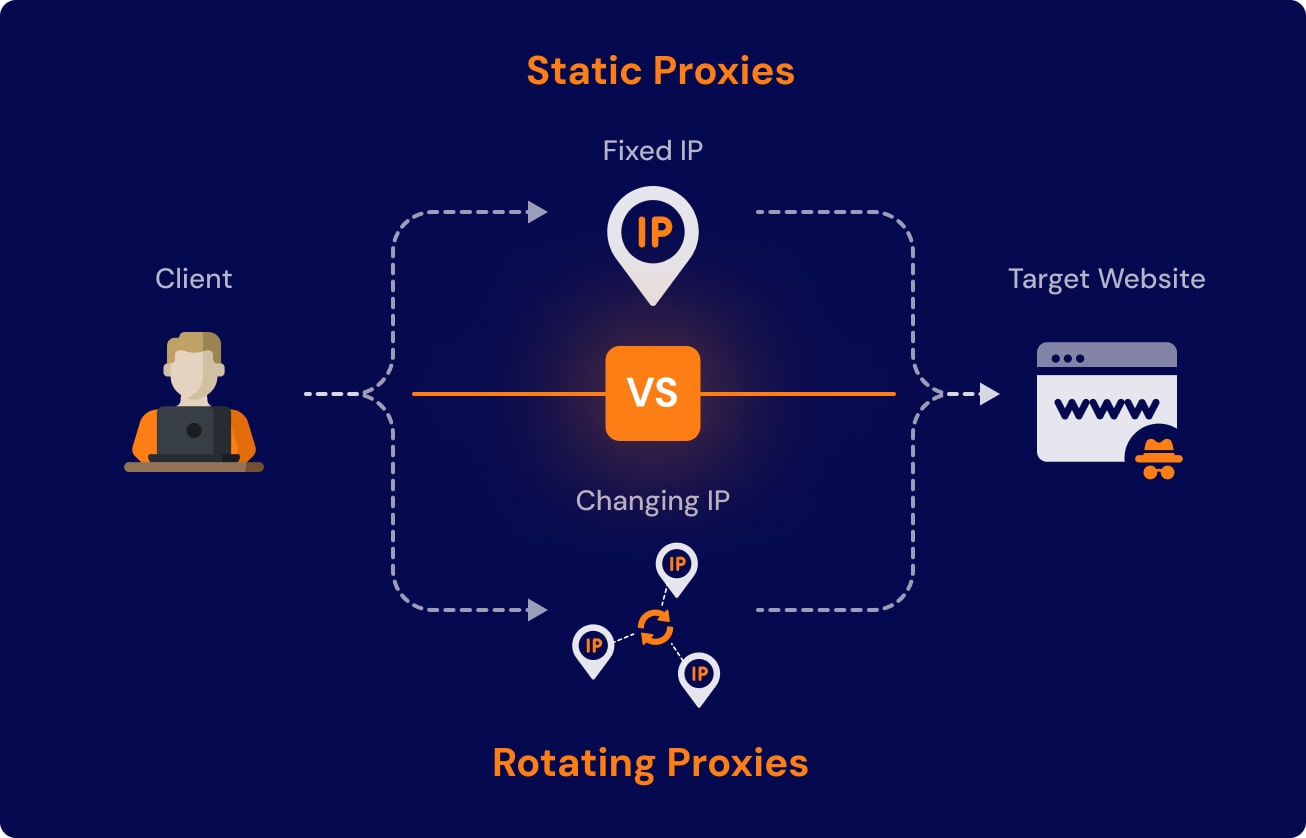

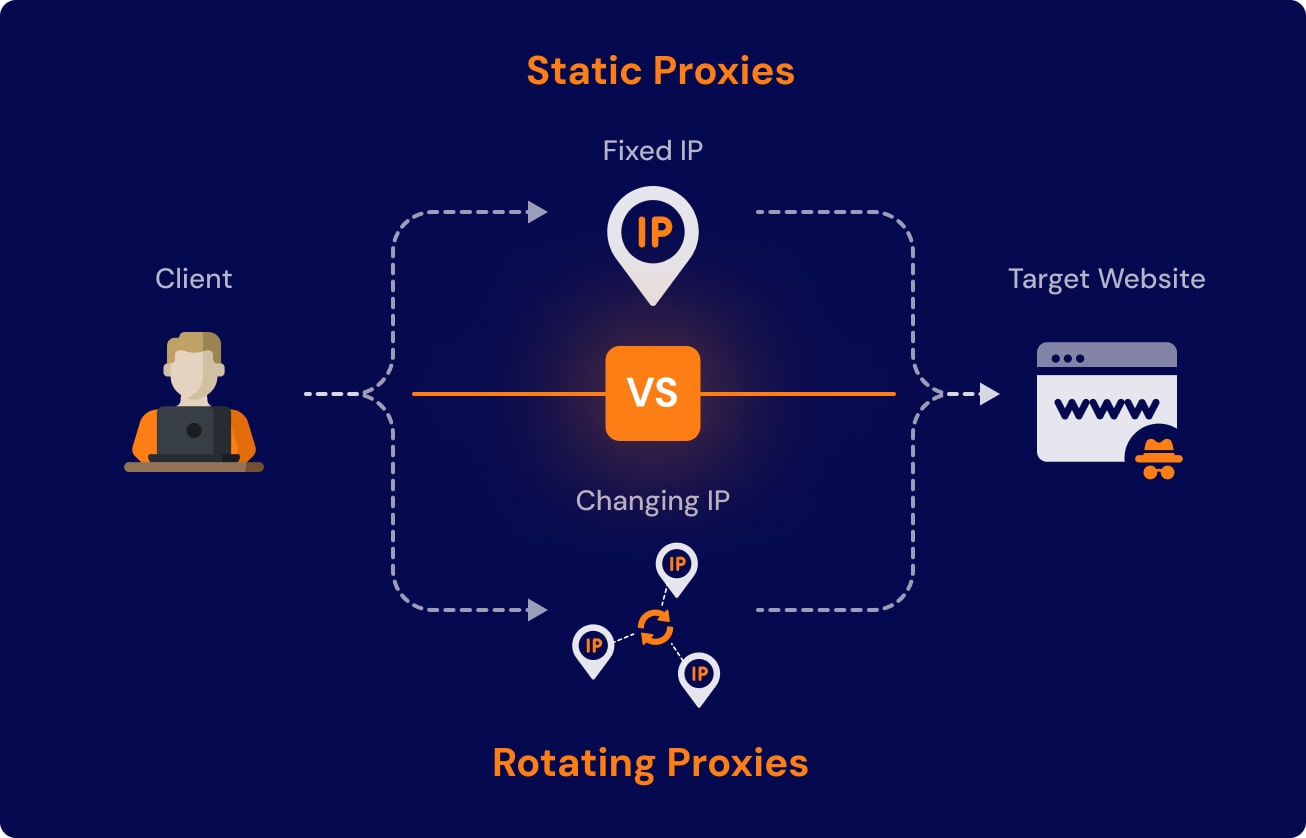

Treat proxy behavior as a first-class signal

Proxy strategy is not a side concern for hard targets.

You want visibility into:

- which pool is generating the highest failure rate

- where CAPTCHAs spike

- which geographic exits work best for a target

- how retry behavior changes by target segment

Without that visibility, teams keep debugging selectors when the real issue is network reputation or session instability.
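One way to get that visibility is to count outcomes per proxy pool and target. The sketch below is an assumption-heavy illustration: `PoolStats`, `ProxyHealth`, the pool names, and the `min_requests` cutoff are all invented for the example, and a production system would more likely emit these counters to its metrics backend.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class PoolStats:
    requests: int = 0
    failures: int = 0
    captchas: int = 0

    @property
    def failure_rate(self) -> float:
        return self.failures / self.requests if self.requests else 0.0

    @property
    def captcha_rate(self) -> float:
        return self.captchas / self.requests if self.requests else 0.0


class ProxyHealth:
    """Per-(pool, target) outcome counters."""

    def __init__(self) -> None:
        self._stats: dict[tuple[str, str], PoolStats] = defaultdict(PoolStats)

    def record(self, pool: str, target: str, ok: bool, captcha: bool = False) -> None:
        stats = self._stats[(pool, target)]
        stats.requests += 1
        if not ok:
            stats.failures += 1
        if captcha:
            stats.captchas += 1

    def stats(self, pool: str, target: str) -> PoolStats:
        return self._stats[(pool, target)]

    def ranked_by_failure(self, target: str, min_requests: int = 20) -> list[tuple[str, float]]:
        """Pools for one target, worst failure rate first.

        Pools with fewer than `min_requests` samples are skipped so a
        single bad request does not dominate the ranking.
        """
        rows = [
            (pool, s.failure_rate)
            for (pool, t), s in self._stats.items()
            if t == target and s.requests >= min_requests
        ]
        return sorted(rows, key=lambda row: row[1], reverse=True)
```

Keying the counters by target as well as pool matters because the same exit can be clean for one site and heavily challenged on another.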

Validate the output where it lands

A resilient scraping pipeline does not stop at extraction.

Once the data lands in a warehouse, API, or operational tool, validate:

- required fields

- type expectations

- row-count changes

- duplication patterns

- freshness windows

The downstream contract matters because the business only sees value when the extracted data is usable, not merely collected.
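A minimal sketch of such a contract check in Python, covering required fields, types, duplicates, and freshness. The `SCHEMA` contents, the field names, and `validate_rows` are hypothetical, and the sketch assumes timestamps are timezone-aware. Rows that fail are set aside with their reasons instead of being dropped or delivered.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Optional

# Hypothetical contract: field name -> expected Python type.
SCHEMA = {"sku": str, "price": float, "scraped_at": datetime}


def validate_rows(
    rows: list[dict[str, Any]],
    schema: dict[str, type],
    key: str,
    max_age: timedelta,
    ts_field: str = "scraped_at",
    now: Optional[datetime] = None,
) -> tuple[list[dict[str, Any]], list[tuple[dict[str, Any], list[str]]]]:
    """Split rows into (accepted, quarantined-with-reasons)."""
    now = now or datetime.now(timezone.utc)
    accepted, quarantined = [], []
    seen = set()
    for row in rows:
        problems = []
        # Required fields and type expectations
        for field, expected in schema.items():
            value = row.get(field)
            if value is None:
                problems.append(f"missing:{field}")
            elif not isinstance(value, expected):
                problems.append(f"type:{field}")
        # Duplication: the same key appearing twice in one batch
        k = row.get(key)
        if k is not None:
            if k in seen:
                problems.append("duplicate")
            seen.add(k)
        # Freshness window (assumes timezone-aware timestamps)
        ts = row.get(ts_field)
        if isinstance(ts, datetime) and now - ts > max_age:
            problems.append("stale")
        if problems:
            quarantined.append((row, problems))
        else:
            accepted.append(row)
    return accepted, quarantined
```

Row-count changes are a batch-level property, so they would be compared against earlier loads separately from this per-row check.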

Keep incident recovery simple

When a scraper breaks, the team needs a recovery path that is easy to understand.

That usually means:

- clear alerts with useful context

- visible runtime logs

- replay support for missed windows

- documented rollback or pause conditions

If recovery depends on a single engineer reverse-engineering the whole system from memory, the runtime is still too fragile.
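Replay support can start with something as small as knowing which collection windows were missed. The sketch below assumes fixed-interval windows identified by their start time and a set of window starts that completed successfully; `missed_windows` is a hypothetical helper, not part of any particular scheduler.

```python
from datetime import datetime, timedelta


def missed_windows(
    start: datetime,
    end: datetime,
    interval: timedelta,
    completed: set[datetime],
) -> list[tuple[datetime, datetime]]:
    """Return (window_start, window_end) for every window in [start, end)
    whose start time is not in `completed`."""
    if interval <= timedelta(0):
        raise ValueError("interval must be positive")
    gaps = []
    cursor = start
    while cursor < end:
        if cursor not in completed:
            gaps.append((cursor, min(cursor + interval, end)))
        cursor += interval
    return gaps
```

The resulting list can be handed to the same job entry point that the scheduler uses, so a replay runs the same code path as a normal run.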

The takeaway

Production scraping resilience comes from runtime design, not from extraction logic alone.

If you treat browser automation, proxy health, monitoring, fallbacks, and downstream validation as one system, the workflow can keep delivering even when the target gets harder to scrape.

Article FAQ

Questions readers usually ask next.

These short answers clarify the practical follow-up questions that often come after the main article.

Selector drift, session handling, anti-bot pressure, and weak monitoring usually break before the extraction logic itself. That is why runtime design matters as much as parsing.

A successful one-time run does not prove the system can survive changing targets. Monitoring and fallbacks make the scraper operable when the site, network, or proxy layer shifts.
