January 16, 2026

Scaling Playwright Scraping with Proxies, Retries, and Fewer Nightly Failures

A practical operating guide for Playwright scraping systems that need to stay stable under anti-bot pressure and changing page behavior.

Article focus

Stable scraping systems are built around fallback paths, retry logic, and operational visibility, not just browser automation scripts.

Most scraping failures do not happen because Playwright is the wrong tool. They happen because the surrounding operating model is too thin.

If the system depends on a single browser path, one proxy provider, and weak retry logic, it will feel stable right up until the first serious target change.

Design for instability from the beginning

Protected sites change. DOM structure changes. Network behavior changes. Rate limits shift. The scraping architecture should assume those things will happen regularly.

That means planning for:

- selector breakage

- intermittent blocking

- degraded proxy pools

- captchas or challenge pages
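For selector breakage, one common mitigation is to keep an ordered list of fallback selectors for each field and record which one matched. The sketch below is library-agnostic and only illustrative: `first_match` and the selector strings are hypothetical, and `query` is any callable that takes a selector and returns a match or `None`.

```python
from typing import Callable, Optional, Sequence, Tuple, TypeVar

T = TypeVar("T")


def first_match(
    query: Callable[[str], Optional[T]],
    selectors: Sequence[str],
) -> Tuple[Optional[str], Optional[T]]:
    """Try selectors in priority order and return (selector, match) for the first hit.

    Returns (None, None) when every selector misses, so the caller can record
    a selector failure instead of crashing mid-run.
    """
    for selector in selectors:
        match = query(selector)
        if match is not None:
            return selector, match
    return None, None


# Hypothetical fallback chain for a price field, most specific selector first.
PRICE_SELECTORS = ["[data-testid='price']", ".product-price", "span.price"]
```

With Playwright's sync Python API, `page.query_selector` fits the `query` parameter, since it returns an element handle or `None`. Logging which selector matched gives an early warning when the primary selector stops working, before the whole chain runs out.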

Retries should be selective

Blind retries often make failures worse. A better retry strategy separates:

- navigation failures

- extraction failures

- anti-bot responses

- upstream timeout conditions

Each type should have a different recovery path. Otherwise the system keeps repeating the same broken action.
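The separation above can be sketched as a classifier plus a per-type recovery policy. This is a minimal illustration under stated assumptions, not a reference implementation: `FailureKind`, `classify`, `plan_recovery`, the challenge text markers, treating HTTP 403/429 as blocks, and the specific policy choices (such as sending extraction failures to review instead of retrying them) are all hypothetical and should be tuned per target.

```python
import random
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class FailureKind(Enum):
    NAVIGATION = "navigation"      # no usable response (connection or HTTP error)
    EXTRACTION = "extraction"      # page loaded but expected data was missing
    ANTI_BOT = "anti_bot"          # challenge page, captcha, or block status
    UPSTREAM_TIMEOUT = "timeout"   # target or proxy too slow to respond


# Assumed text markers of a challenge page; tune these per target.
CHALLENGE_MARKERS = ("captcha", "verify you are human", "access denied")


def classify(status: Optional[int], timed_out: bool, html: str, extracted: bool) -> Optional[FailureKind]:
    """Return the failure type for one attempt, or None if it succeeded."""
    if timed_out:
        return FailureKind.UPSTREAM_TIMEOUT
    if status is None:
        return FailureKind.NAVIGATION  # no response at all
    text = html.lower()
    if status in (403, 429) or any(marker in text for marker in CHALLENGE_MARKERS):
        return FailureKind.ANTI_BOT
    if status >= 400:
        return FailureKind.NAVIGATION
    return None if extracted else FailureKind.EXTRACTION


@dataclass(frozen=True)
class RecoveryPlan:
    retry: bool
    rotate_proxy: bool
    new_session: bool
    delay_s: float


def plan_recovery(kind: FailureKind, attempt: int, max_attempts: int = 3, base_s: float = 2.0) -> RecoveryPlan:
    """Choose a recovery path per failure type (an assumed policy, not a standard)."""
    if attempt >= max_attempts or kind is FailureKind.EXTRACTION:
        # Assumed policy: a changed selector fails the same way on every
        # attempt, so extraction failures go to review instead of a re-fetch.
        return RecoveryPlan(retry=False, rotate_proxy=False, new_session=False, delay_s=0.0)
    # Exponential backoff with full jitter: uniform delay in [0, base_s * 2**attempt].
    backoff = random.uniform(0, base_s * 2 ** attempt)
    if kind is FailureKind.NAVIGATION:
        # Often transient: keep the same route and identity.
        return RecoveryPlan(retry=True, rotate_proxy=False, new_session=False, delay_s=backoff)
    if kind is FailureKind.UPSTREAM_TIMEOUT:
        # Slow route: switch proxy, keep the session.
        return RecoveryPlan(retry=True, rotate_proxy=True, new_session=False, delay_s=backoff)
    # ANTI_BOT: retrying with the same identity tends to hit the same block.
    return RecoveryPlan(retry=True, rotate_proxy=True, new_session=True, delay_s=backoff * 2)
```

The design choice worth keeping is that the failure type, not a global retry counter alone, decides whether to wait, rotate the proxy, or start a fresh session.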

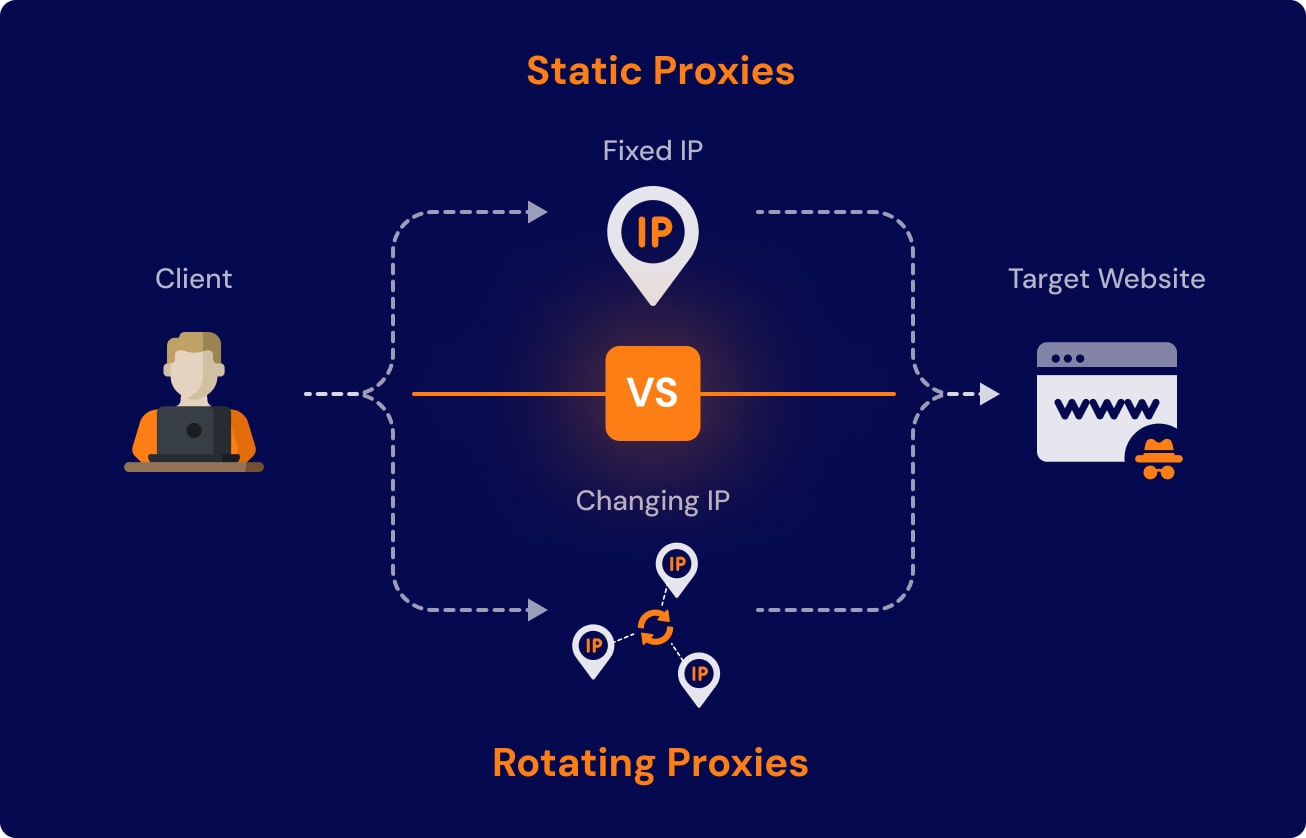

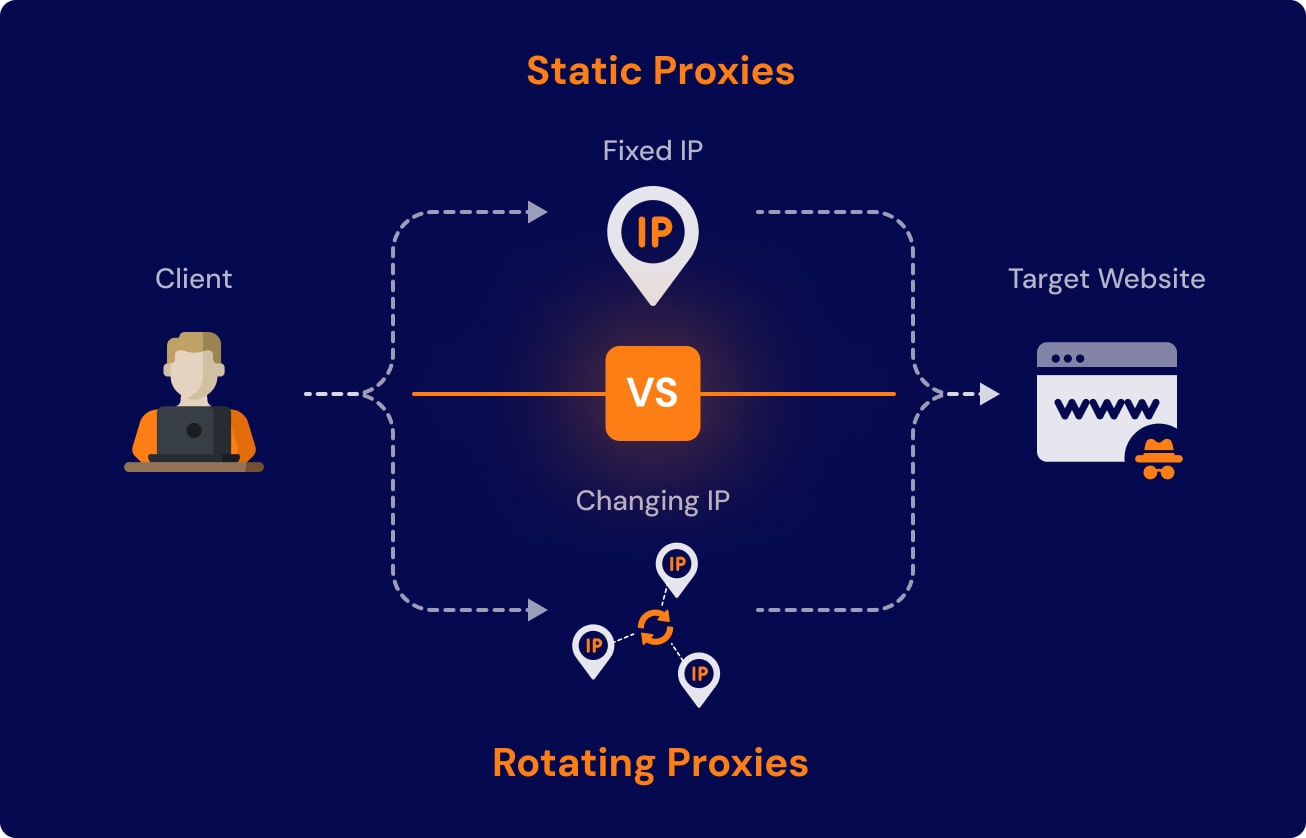

Proxies are an operating layer, not a checkbox

A proxy provider is only part of the picture. Teams also need routing logic, health checks, and visibility into which targets or geographies are actually failing.

Useful questions include:

- which proxy pools fail most often

- which targets need residential traffic

- when to rotate sessions versus identities

- which retries burn budget without improving success
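One way to turn those questions into routing logic is to record outcomes per proxy pool and target, keep an error breakdown, and route away from pools whose success rate drops. In this sketch `ProxyRouter`, the pool names, and the thresholds are hypothetical; untried pools are scored optimistically so they still get sampled.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class PoolStats:
    successes: int = 0
    failures: int = 0
    errors: Dict[str, int] = field(default_factory=lambda: defaultdict(int))

    @property
    def attempts(self) -> int:
        return self.successes + self.failures

    @property
    def success_rate(self) -> float:
        # Untried pools score 1.0 so they still get sampled.
        return self.successes / self.attempts if self.attempts else 1.0


class ProxyRouter:
    """Tracks outcomes per (pool, target) and routes away from failing pools."""

    def __init__(self, pools: List[str], min_rate: float = 0.5, min_samples: int = 20) -> None:
        self.pools = pools
        self.min_rate = min_rate        # below this rate a pool counts as unhealthy
        self.min_samples = min_samples  # don't judge a pool on too few attempts
        self.stats: Dict[Tuple[str, str], PoolStats] = defaultdict(PoolStats)

    def record(self, pool: str, target: str, ok: bool, error: Optional[str] = None) -> None:
        s = self.stats[(pool, target)]
        if ok:
            s.successes += 1
        else:
            s.failures += 1
            s.errors[error or "unknown"] += 1

    def healthy(self, pool: str, target: str) -> bool:
        s = self.stats[(pool, target)]
        return s.attempts < self.min_samples or s.success_rate >= self.min_rate

    def choose(self, target: str) -> Optional[str]:
        """Pick the healthy pool with the best success rate for this target."""
        candidates = [p for p in self.pools if self.healthy(p, target)]
        if not candidates:
            return None  # every pool is failing: escalate instead of burning retries
        return max(candidates, key=lambda p: self.stats[(p, target)].success_rate)
```

Keying stats by target as well as pool matters here: a datacenter pool can be fine for one site and blocked on another, which is how "which targets need residential traffic" becomes visible in the data.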

Treat extraction as a product surface

The browser gets you to the page. Extraction quality determines whether the result is useful.

A stronger system validates:

- required fields

- schema shape

- suspicious empty states

- duplicate records after retries
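The checks above can run as a gate between the browser and storage. This sketch assumes a hypothetical product schema (`url`, `title`, `price`) and uses `url` as the identity key for deduplication; both are placeholders for whatever identifies a record on a real target.

```python
import json
from typing import Any, Dict, Iterable, List, Tuple

# Hypothetical schema for a product record: field name -> expected type.
SCHEMA: Dict[str, type] = {"url": str, "title": str, "price": float}
REQUIRED = ("url", "title", "price")
KEY_FIELDS = ("url",)  # fields that identify the same record across retries


def validate(record: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the record passed."""
    problems = []
    for name in REQUIRED:
        if record.get(name) is None:
            problems.append(f"missing:{name}")
    for name, expected in SCHEMA.items():
        value = record.get(name)
        if value is not None and not isinstance(value, expected):
            problems.append(f"type:{name}")
    # Blank strings are treated as suspicious empty states: they can mean a
    # placeholder or challenge page was scraped instead of real content.
    for name, value in record.items():
        if isinstance(value, str) and not value.strip():
            problems.append(f"empty:{name}")
    return problems


def accept(records: Iterable[Dict[str, Any]]) -> Tuple[List[Dict[str, Any]], List[Tuple[Dict[str, Any], List[str]]]]:
    """Split records into (valid, rejected) and drop duplicates left by retries."""
    seen = set()
    valid, rejected = [], []
    for record in records:
        problems = validate(record)
        if problems:
            rejected.append((record, problems))
            continue
        key = json.dumps([record[name] for name in KEY_FIELDS])
        if key in seen:
            continue  # same record emitted again by a retried attempt
        seen.add(key)
        valid.append(record)
    return valid, rejected
```

Rejected records keep their problem codes, so they can feed the data quality exceptions an operator sees instead of disappearing silently.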

Without those checks, a scraper may look green while quietly returning junk.

Add operator visibility early

The most valuable scraping dashboards show:

- success rate by target

- retry counts

- proxy error breakdown

- challenge-page detection

- data quality exceptions

Operators should know whether the problem is browser behavior, target response, network routing, or extraction logic.
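A minimal way to get that attribution is to tag every failure with an error code and map each code to a layer when aggregating. The event fields, error codes, and `LAYER` mapping below are assumptions for illustration; in practice the rows would feed whatever metrics or dashboard tool the team already runs.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Any, Dict, Iterable, Optional

# Hypothetical error codes mapped to the layer an operator should inspect.
LAYER = {
    "browser_crash": "browser",
    "script_error": "browser",
    "challenge": "target",
    "http_403": "target",
    "proxy_timeout": "network",
    "proxy_refused": "network",
    "validation": "extraction",
}


@dataclass
class Event:
    target: str
    ok: bool
    retries: int = 0
    error: Optional[str] = None  # one of the LAYER keys when ok is False


def summarize(events: Iterable[Event]) -> Dict[str, Dict[str, Any]]:
    """Roll raw scrape events up into per-target dashboard rows."""
    rows: Dict[str, Dict[str, Any]] = defaultdict(
        lambda: {"attempts": 0, "ok": 0, "retries": 0, "layers": Counter()}
    )
    for e in events:
        row = rows[e.target]
        row["attempts"] += 1
        row["ok"] += int(e.ok)
        row["retries"] += e.retries
        if not e.ok:
            # Unmapped codes show up as "unknown" instead of being dropped.
            row["layers"][LAYER.get(e.error or "", "unknown")] += 1
    for row in rows.values():
        row["success_rate"] = row["ok"] / row["attempts"]
    return dict(rows)
```

A growing "unknown" bucket is itself a useful signal: it usually means a new failure mode has appeared that nobody has classified yet.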

The takeaway

Scaling Playwright scraping is less about writing clever browser code and more about building a resilient operating model around it.

The teams that stay stable design for breakage, measure recovery, and make extraction quality visible every day.

Article FAQ

Playwright scraping systems usually fail because the operating model is too thin: one browser path, a weak retry strategy, limited proxy controls, and poor visibility into changing target behavior.

A strong proxy layer includes routing logic, health checks, visibility by target and geography, and clear rules for when to rotate sessions, identities, or providers.
